A Fortune 500 company needs to implement sentiment analysis across customer support tickets, product reviews, and social media mentions. This scenario illustrates the paradigm shift from “build vs. buy” to “configure vs. code.”

Organizations can approach AI implementation in three ways: building custom integrations directly against model provider APIs, buying separate per-vendor SaaS solutions, or configuring a unified visual platform that abstracts these integrations behind a single orchestration layer. Each approach carries distinct tradeoffs across API management, authentication, rate limiting, and error handling. Custom builds require teams to implement each of these concerns manually per provider, whereas visual platforms consolidate them into a single abstraction, shifting maintenance responsibility to the platform and allowing teams to focus on workflow logic rather than infrastructure.
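To make the consolidation concrete, here is a minimal sketch of a single client that applies one retry policy to every provider. The names `ProviderAdapter`, `UnifiedClient`, and `ProviderError` are hypothetical and not taken from any real platform; a production layer would also handle authentication and rate limiting in the same place.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional


class ProviderError(Exception):
    """Hypothetical error type an adapter raises for transient failures."""


@dataclass
class ProviderAdapter:
    """Wraps one provider's client behind a common call signature (assumption)."""
    name: str
    call: Callable[[str], str]  # takes input text, returns the provider's result
    max_retries: int = 2


class UnifiedClient:
    """Single entry point that applies the same retry policy to every provider."""

    def __init__(self, backoff_seconds: float = 0.0) -> None:
        self._adapters: Dict[str, ProviderAdapter] = {}
        self._backoff = backoff_seconds

    def register(self, adapter: ProviderAdapter) -> None:
        self._adapters[adapter.name] = adapter

    def run(self, provider: str, text: str) -> str:
        adapter = self._adapters[provider]  # KeyError if the provider is unknown
        last_error: Optional[Exception] = None
        for attempt in range(adapter.max_retries + 1):
            try:
                return adapter.call(text)
            except ProviderError as exc:
                last_error = exc
                # Exponential backoff between attempts
                time.sleep(self._backoff * (2 ** attempt))
        raise RuntimeError(f"{provider} failed after retries") from last_error
```

With this shape, adding a provider means writing one adapter; the retry logic is not repeated per integration.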

Organizations attempting to juggle numerous AI models and services face a critical question: How do you architect no-code platforms without sacrificing the technical control developers demand? The technical architecture beneath visual abstractions presents distinctive engineering challenges that require sophisticated solutions.

The Technical Architecture Behind Visual Abstractions

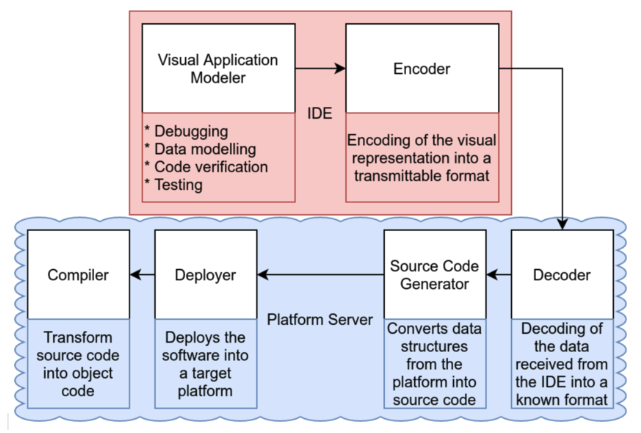

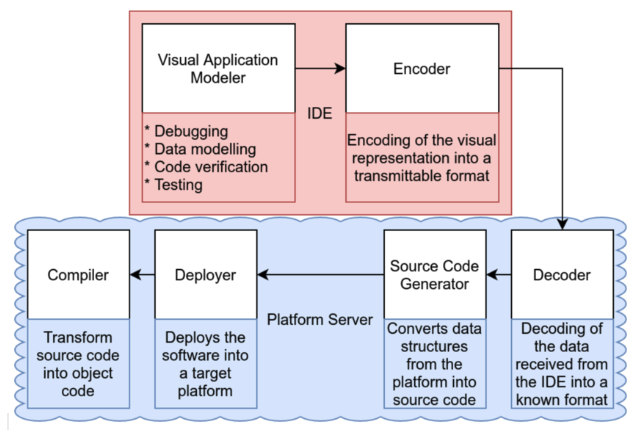

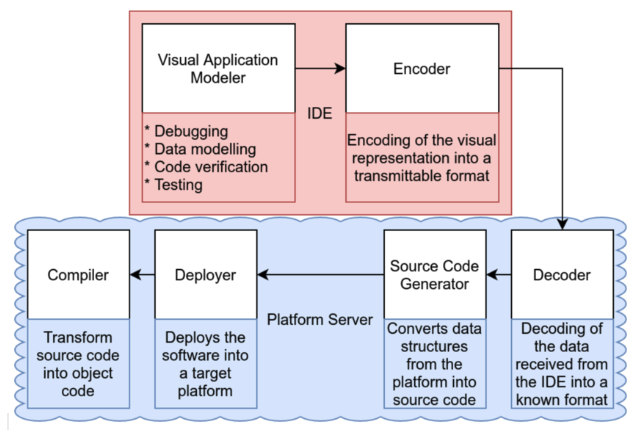

No-code AI platforms must abstract model training and orchestration into visual builders while preserving the full spectrum of underlying AI capabilities. Effective platforms implement a multi-layer architecture following the common architecture pattern for low-code platforms, where an API gateway/broker layer terminates authentication, applies rate limits, and routes each canvas block to the appropriate model microservice.

This figure illustrates the multi-layer architecture for AI workflow orchestration from visual abstraction to model deployment. Source: ResearchGate
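The gateway layer's three duties (authenticate, rate-limit, route) can be sketched in a few lines. This is an illustrative in-process model, not a real gateway: `Gateway`, `TokenBucket`, the block type names, and the status-code dictionaries are all assumptions made for the example.

```python
import time
from typing import Callable, Dict, Set


class TokenBucket:
    """Token-bucket limiter: up to `capacity` requests, refilled at `rate` per second."""

    def __init__(self, capacity: int, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    """Hypothetical gateway: checks the API key, applies the limit, then routes."""

    def __init__(self, api_keys: Set[str], limiter: TokenBucket) -> None:
        self.api_keys = api_keys
        self.limiter = limiter
        self.routes: Dict[str, Callable[[dict], dict]] = {}

    def register(self, block_type: str, handler: Callable[[dict], dict]) -> None:
        self.routes[block_type] = handler

    def handle(self, api_key: str, block_type: str, payload: dict) -> dict:
        if api_key not in self.api_keys:
            return {"status": 401, "error": "unauthorized"}
        if not self.limiter.allow():
            return {"status": 429, "error": "rate limit exceeded"}
        handler = self.routes.get(block_type)
        if handler is None:
            return {"status": 404, "error": f"no service for block '{block_type}'"}
        return {"status": 200, "result": handler(payload)}
```

Each canvas block maps to one `block_type`, so the visual layer never needs to know which microservice sits behind it.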

LLM orchestration frameworks, such as LangChain and LangGraph, expose higher-level primitives (prompts, memory, tools) that map directly onto drag-and-drop nodes. n8n exemplifies this approach with extensive AI nodes integrated with LangChain, demonstrating how platforms can achieve AI-native architecture while maintaining visual simplicity.

This creates declarative configuration approaches where visual workflows generate JSON or YAML configurations describing desired states rather than imperative code, allowing platforms to optimize execution paths behind the scenes.
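As a rough illustration of the declarative idea, the sketch below treats a JSON document as the workflow and lets a small engine decide execution order from the declared dependencies. The schema (`nodes`, `op`, `inputs`) and the three operations are invented for this example; real platforms define their own formats.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow config, as a visual builder might emit it
WORKFLOW_JSON = """
{
  "nodes": {
    "normalize": {"op": "lower", "inputs": ["clean"]},
    "fetch":     {"op": "constant", "value": "  Great product!  ", "inputs": []},
    "clean":     {"op": "strip", "inputs": ["fetch"]}
  }
}
"""

# Operations the engine knows how to run (illustrative only)
OPS = {
    "constant": lambda node, args: node["value"],
    "strip": lambda node, args: args[0].strip(),
    "lower": lambda node, args: args[0].lower(),
}


def run_workflow(config_text: str) -> dict:
    """Execute nodes in dependency order, regardless of their order in the file."""
    nodes = json.loads(config_text)["nodes"]
    graph = {name: set(node["inputs"]) for name, node in nodes.items()}
    results = {}
    for name in TopologicalSorter(graph).static_order():
        node = nodes[name]
        args = [results[dep] for dep in node["inputs"]]
        results[name] = OPS[node["op"]](node, args)
    return results
```

Because the config states what depends on what rather than the order of calls, the engine is free to reorder, cache, or parallelize independent nodes.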

Abstraction introduces trade-offs. Drag-and-drop interfaces provide accessibility but can limit fine-grained control over parameters. Sophisticated platforms address this through progressive disclosure principles, where complexity is revealed based on the user’s level of expertise.
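One way to picture progressive disclosure is a parameter schema in which every setting carries a minimum expertise level. The levels, parameter names, and defaults below are assumptions chosen for illustration, not a description of any specific product.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Any, Dict, List


class Expertise(IntEnum):
    BEGINNER = 1
    INTERMEDIATE = 2
    EXPERT = 3


@dataclass(frozen=True)
class Param:
    name: str
    default: Any
    min_level: Expertise  # lowest expertise level at which the UI shows this field


# Hypothetical parameters for an LLM block
PARAMS = [
    Param("prompt", "", Expertise.BEGINNER),
    Param("temperature", 0.7, Expertise.INTERMEDIATE),
    Param("top_p", 1.0, Expertise.EXPERT),
]


def visible_params(level: Expertise) -> List[str]:
    """Names of the fields a user at `level` can see and edit."""
    return [p.name for p in PARAMS if p.min_level <= level]


def effective_config(level: Expertise, overrides: Dict[str, Any]) -> Dict[str, Any]:
    """Hidden fields keep their defaults; only visible fields may be overridden."""
    visible = set(visible_params(level))
    config = {p.name: p.default for p in PARAMS}
    for key, value in overrides.items():
        if key not in visible:
            raise PermissionError(f"'{key}' is not exposed at level {level.name}")
        config[key] = value
    return config
```

Every block always runs with a complete configuration; the level only controls how much of it the user is asked to think about.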

Multi-Model Orchestration Challenges

Coordinating multiple AI services simultaneously creates complexity. Multi-agent orchestration requires intelligent routing systems that analyze intent and route queries to optimal models based on semantic understanding, performance metrics, and cost considerations.
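A routing decision of this kind can be sketched as "filter by intent, then minimize a weighted score of latency and cost". The keyword classifier below is a deliberate stand-in for real semantic understanding, and the model names, metrics, and weights are made up for the example.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ModelProfile:
    name: str
    intents: FrozenSet[str]      # intents this model handles well
    p95_latency_ms: float        # observed performance metric
    cost_per_1k_tokens: float    # cost metric


def classify_intent(query: str) -> str:
    """Stand-in for a semantic classifier; keyword rules are illustrative only."""
    q = query.lower()
    if "translate" in q:
        return "translation"
    if any(word in q for word in ("sentiment", "review", "feel")):
        return "sentiment"
    return "general"


def route(query: str, models: List[ModelProfile],
          latency_weight: float = 0.001, cost_weight: float = 1.0) -> ModelProfile:
    """Pick the lowest-scoring model among those that support the query's intent."""
    intent = classify_intent(query)
    candidates = [m for m in models if intent in m.intents]
    if not candidates:
        # Fall back to general-purpose models when no specialist matches
        candidates = [m for m in models if "general" in m.intents]
    if not candidates:
        raise LookupError(f"no model available for intent '{intent}'")
    return min(
        candidates,
        key=lambda m: latency_weight * m.p95_latency_ms + cost_weight * m.cost_per_1k_tokens,
    )
```

The weights encode the business trade-off: raising `latency_weight` favors fast models, while raising `cost_weight` favors cheap ones.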

State management presents the most significant challenge. Coordinating multiple AI models requires handling distributed transaction management across services while maintaining conversation state and implementing memory hierarchies that balance short-term and long-term requirements.
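A two-tier memory hierarchy can be sketched as a small short-term window whose evicted turns move into a searchable long-term store. `ConversationMemory` is a hypothetical class, and the substring search stands in for whatever retrieval (for example, embeddings) a real system would use.

```python
from collections import deque
from typing import Deque, List, Tuple

Turn = Tuple[str, str]  # (role, text)


class ConversationMemory:
    """Short-term window for prompt context; older turns spill to long-term storage."""

    def __init__(self, short_term_size: int = 3) -> None:
        self.short_term_size = short_term_size
        self.short_term: Deque[Turn] = deque()
        self.long_term: List[Turn] = []

    def add(self, role: str, text: str) -> None:
        self.short_term.append((role, text))
        # Evict the oldest turns once the window is full, keeping them for recall
        while len(self.short_term) > self.short_term_size:
            self.long_term.append(self.short_term.popleft())

    def context(self) -> List[Turn]:
        """Turns that would be sent to the model on the next call."""
        return list(self.short_term)

    def recall(self, keyword: str) -> List[Turn]:
        """Naive long-term lookup; a real system would likely use semantic search."""
        needle = keyword.lower()
        return [turn for turn in self.long_term if needle in turn[1].lower()]
```

The window size is the balancing knob: a larger window preserves more recent context per call at higher token cost, while a smaller one relies more on recall.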

Zapier’s enterprise success illustrates scalable orchestration, with their AI adoption reaching 89%, while enterprise customers have reported resolving 28% of IT tickets automatically with just a 3-person team supporting 1,700 employees.

Platform Engineering Trade-offs

Balancing accessibility with technical control creates a central tension in no-code AI platform design. Successful platforms implement hybrid approaches that support both visual development and code-based customization, ensuring all visual capabilities remain accessible programmatically through API-first design principles.

Integration patterns that prevent technical debt require careful consideration: version control integration, where visual workflows serialize to Git-compatible formats, API contract enforcement using standardized specifications, and a modular architecture that encourages reusable components over monolithic design.
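For version control, the main requirement is that the same workflow always serializes to the same bytes, so Git diffs show only real changes. The sketch below assumes a hypothetical workflow shape with a `nodes` list whose entries each carry an `id`.

```python
import json


def serialize_workflow(workflow: dict) -> str:
    """Produce a deterministic, line-oriented JSON form suitable for Git diffs."""
    canonical = dict(workflow)
    # Canvas order is arbitrary, so sort nodes by id to keep output stable
    canonical["nodes"] = sorted(workflow.get("nodes", []), key=lambda node: node["id"])
    # sort_keys fixes key order; indent puts each field on its own line;
    # the trailing newline avoids "no newline at end of file" noise in diffs
    return json.dumps(canonical, sort_keys=True, indent=2, ensure_ascii=False) + "\n"
```

Two editors that save the same workflow with nodes or keys in a different order then produce identical files, which keeps merges and reviews readable.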

Common edge cases that challenge no-code platforms include complex conditional logic requiring intricate branching beyond visual representation, high-frequency real-time processing that exceeds visual abstraction capabilities, and dynamic workflow generation based on runtime conditions. Platform design must anticipate these scenarios through escape hatches and hybrid execution models.
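A hybrid execution model can be as simple as a pipeline that accepts either a named built-in block or an arbitrary function. Everything here (`BUILTIN_BLOCKS`, `run_pipeline`, the escalation rule) is a hypothetical sketch of the escape-hatch idea.

```python
from typing import Callable, List, Union

# Blocks available on the visual canvas (illustrative)
BUILTIN_BLOCKS = {
    "strip": str.strip,
    "lower": str.lower,
}

# A step is either the name of a built-in block or developer-supplied code
Step = Union[str, Callable[[str], str]]


def run_pipeline(steps: List[Step], value: str) -> str:
    for step in steps:
        if callable(step):
            value = step(value)                  # escape hatch: custom code
        else:
            value = BUILTIN_BLOCKS[step](value)  # declarative built-in block
    return value


def escalate_if_angry(text: str) -> str:
    """Custom branching rule that would be awkward to draw as canvas nodes."""
    if "refund" in text and text.count("!") >= 3:
        return "ESCALATE: " + text
    return text
```

For example, `run_pipeline(["strip", "lower", escalate_if_angry], "  REFUND now!!!  ")` mixes two canvas blocks with one code step in a single run.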

Developer Experience Considerations

Enterprise adoption depends on technical capabilities that preserve flexibility while providing accessibility. Essential requirements include comprehensive API coverage with support for REST, GraphQL, and WebSocket, enterprise authentication features such as SSO and OAuth 2.0, and testing capabilities on par with native development environments.

Debugging and observability become particularly challenging when workflows span multiple AI services. Distributed tracing using OpenTelemetry standards provides request tracking across services, while real-time monitoring dashboards and AI-powered root cause analysis can identify issues within seconds.
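The core mechanism behind request tracking is context propagation: every hop keeps the same trace ID and mints a new span ID. The sketch below uses only the standard library and the W3C Trace Context `traceparent` header format; it is not the OpenTelemetry SDK, and the helper names are assumptions.

```python
import re
import secrets

# traceparent layout: version - trace-id (32 hex) - parent-id (16 hex) - flags (2 hex)
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent() -> str:
    """Start a new trace at the workflow entry point (sampled flag set)."""
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-01"


def child_traceparent(parent: str) -> str:
    """Header for a downstream call: same trace ID, fresh span ID."""
    match = TRACEPARENT_RE.match(parent)
    if match is None:
        raise ValueError(f"malformed traceparent: {parent!r}")
    trace_id, _parent_span_id, flags = match.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


def trace_id_of(header: str) -> str:
    match = TRACEPARENT_RE.match(header)
    if match is None:
        raise ValueError(f"malformed traceparent: {header!r}")
    return match.group(1)
```

A tracing backend can then group the spans from every service a workflow touched by their shared trace ID.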

Extension mechanisms that work best include custom component SDKs with comprehensive documentation, plugin architectures for dynamic extension loading, and webhook systems for external integrations.
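Dynamic extension loading can be sketched with Python's `importlib`: the platform stores a `module:attribute` string in configuration and resolves it at runtime. The `module:attribute` convention and the `load_extension` name are assumptions for this example, and a real platform would add sandboxing and an allow-list before importing anything.

```python
import importlib
from typing import Callable


def load_extension(spec: str) -> Callable:
    """Resolve a 'module:attribute' string to a callable at runtime."""
    module_name, _, attr = spec.partition(":")
    if not module_name or not attr:
        raise ValueError(f"expected 'module:attribute', got {spec!r}")
    module = importlib.import_module(module_name)  # raises ImportError if missing
    extension = getattr(module, attr)               # raises AttributeError if missing
    if not callable(extension):
        raise TypeError(f"{spec!r} does not refer to a callable")
    return extension
```

For instance, `load_extension("math:sqrt")` returns the standard library's square-root function; a plugin package would be referenced the same way.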

Future Technical Directions

AI orchestration platform architecture is evolving rapidly to support multimodal AI integration. Unified multimodal interfaces that handle text, image, audio, and video processing require stream processing architectures for real-time data handling, along with cross-modal transformation capabilities that enable automatic conversion between modalities.

AI agents are assuming critical infrastructure roles, with autonomous optimization agents automatically tuning system performance, self-healing infrastructure detecting and correcting errors, and predictive maintenance agents preventing failures before they occur.

Over the next 2-3 years, orchestration engines must solve semantic interoperability across proliferating AI models and providers, achieve real-time performance at scale with consistent sub-100ms latency, and implement privacy-preserving computation for sensitive data processing.

Competitive Positioning Insights

Success lies not in eliminating complexity, but in architecting systems that scale from simple workflows to complex enterprise orchestration without forcing users to choose between power and accessibility. Competitive advantage will belong to platforms mastering the technical balancing act of hiding complexity while preserving developer control through escape hatches, extensibility, and programmatic access.

The platforms dominating the next AI adoption phase will solve the fundamental engineering challenge: making AI accessible to non-technical users while providing the technical depth developers require.