Data engineering is no longer about building pipelines that follow instructions. It’s about building systems that think, adapt, and repair themselves. The traditional model of static workflows, manual monitoring, and reactive debugging is breaking under the pressure of modern data scale and velocity.

Agentic data pipelines change that completely. They replace rigid processes with autonomous systems powered by AI agents that can observe, reason, act, and learn in real time. Instead of waiting for engineers to intervene, these pipelines make decisions on their own, handle failures as they happen, and continuously improve from experience.

This shift isn’t theoretical. It’s already redefining how data platforms are built and operated in 2026. In this blog, we break down how agentic pipelines work, what makes them different, and how teams can start adopting them without unnecessary risk.

What Are Agentic Data Pipelines?

Traditional data pipelines follow fixed instructions. Engineers define workflows, schedule jobs, and fix failures manually. Agentic pipelines remove that rigidity. They’re AI-driven systems that can reason, plan, act, and learn without constant human input. In 2026, this is no longer experimental. Most new data infrastructure is being created and managed by AI agents, not humans.

The Six Layers of an Agentic Pipeline: How Intelligence Is Built Into Data Systems

1. Intent Layer

The intent layer defines the goal of the pipeline instead of just the steps. It captures business goals, data consumers, and expectations around freshness, accuracy, and reliability. This allows the system to prioritize decisions dynamically based on outcomes, not instructions. Without intent, the pipeline cannot adapt and simply executes blindly.

2. Observability Layer

The observability layer provides continuous visibility into pipeline health, data quality, and system performance. It tracks signals such as failures, schema drift, anomalies, and SLA breaches in real time. These signals are the foundation for decision-making. Without strong observability, the system lacks awareness and cannot respond effectively.

3. Reasoning Engine

The reasoning engine is the decision-making core that interprets signals and determines the appropriate course of action. It performs root cause analysis, evaluates possible fixes, and selects the best response based on context. This replaces generic reactions with intelligent, situation-aware decisions. It’s what makes the pipeline autonomous instead of reactive.

4. Action Layer

The action layer executes decisions directly within the system by interacting with orchestration tools and infrastructure. It can restart jobs, scale resources, modify queries, or isolate faulty data. This layer ensures that decisions are not just theoretical but actually implemented in production. Speed and reliability of execution define its effectiveness.

5. Memory Layer

The memory layer stores past incidents, decisions, and outcomes to improve future responses. It allows the system to learn from recurring issues and resolve them faster over time. Instead of re-analyzing every problem, the pipeline builds operational intelligence. This continuous learning is what drives long-term efficiency and resilience.

6. Governance Layer

The governance layer enforces policies, controls, and compliance boundaries for all actions. It defines what can be automated, what requires approval, and ensures every decision is logged and traceable. This layer builds trust by balancing autonomy with control. Without governance, the system risks making unchecked changes in production.
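To make the first two layers concrete, here is a minimal Python sketch of how an intent specification might feed observability checks. The `Intent` dataclass, the `observe` function, and the signal names are all hypothetical, chosen only for illustration; a real platform would draw the same information from its own catalog and monitoring tools.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Intent:
    """Hypothetical intent spec: what the pipeline is for, not how it runs."""
    consumer: str
    max_staleness: timedelta          # freshness expectation
    expected_schema: dict[str, str]   # column name -> type


def observe(intent: Intent, last_loaded_at: datetime,
            observed_schema: dict[str, str], now: datetime) -> list[str]:
    """Compare observed state with the intent and return a list of signals."""
    signals = []
    if now - last_loaded_at > intent.max_staleness:
        signals.append("sla_breach:freshness")
    expected, observed = intent.expected_schema, observed_schema
    for col in sorted(expected.keys() - observed.keys()):
        signals.append(f"schema_drift:missing:{col}")
    for col in sorted(observed.keys() - expected.keys()):
        signals.append(f"schema_drift:added:{col}")
    for col in sorted(expected.keys() & observed.keys()):
        if expected[col] != observed[col]:
            signals.append(f"schema_drift:type_changed:{col}")
    return signals


intent = Intent(
    consumer="finance_dashboard",
    max_staleness=timedelta(hours=1),
    expected_schema={"order_id": "int", "amount": "float"},
)
signals = observe(
    intent,
    last_loaded_at=datetime(2026, 1, 1, 8, 0),
    observed_schema={"order_id": "int", "amount": "str", "coupon": "str"},
    now=datetime(2026, 1, 1, 10, 0),
)
print(signals)
```

The point of the sketch is that signals are derived from declared expectations rather than hard-coded thresholds, so changing the intent changes what counts as a problem.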

AI-Driven Pipeline Automation Loop: From Detection to Self-Healing

Agentic pipelines operate on a continuous loop that enables real-time decision-making and self-healing without human intervention. Each step in the loop plays a distinct role in maintaining and improving the system.

- Observe: Continuously monitors system signals, including logs, metrics, data quality, schema changes, and performance indicators. This step ensures the pipeline has full visibility into both data and infrastructure conditions in real time.

- Reason: Analyzes the observed signals to identify root causes of issues. It differentiates between transient errors and deeper systemic problems, then determines the most effective course of action based on context and intent.

- Act: Executes the chosen response directly within the system. This could involve retrying jobs, scaling resources, modifying queries, or isolating problematic data to prevent downstream impact.

- Remember: Stores the incident, decision, and outcome as part of the system’s memory. This enables faster and more accurate handling of similar issues in the future, improving performance over time.
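The four steps can be sketched as a small Python loop. Everything here is illustrative: `PipelineAgent`, the rule table, and the action names are assumptions standing in for a real reasoning model and a real orchestrator integration.

```python
from dataclasses import dataclass, field


@dataclass
class Incident:
    signal: str
    diagnosis: str
    action: str
    succeeded: bool


@dataclass
class PipelineAgent:
    memory: list[Incident] = field(default_factory=list)

    def reason(self, signal: str) -> tuple[str, str]:
        """Return (diagnosis, action), reusing a remembered fix that worked before."""
        for past in reversed(self.memory):
            if past.signal == signal and past.succeeded:
                return past.diagnosis, past.action
        # Hypothetical rule table standing in for an LLM or root-cause model.
        rules = {
            "timeout": ("transient error", "retry_job"),
            "schema_drift": ("upstream change", "quarantine_batch"),
            "oom": ("resource pressure", "scale_up"),
        }
        return rules.get(signal, ("unknown", "notify_human"))

    def act(self, action: str) -> bool:
        """Placeholder executor; a real one would call the orchestrator."""
        return action != "notify_human"

    def step(self, signal: str) -> Incident:
        diagnosis, action = self.reason(signal)   # Reason
        succeeded = self.act(action)              # Act
        incident = Incident(signal, diagnosis, action, succeeded)
        self.memory.append(incident)              # Remember
        return incident


agent = PipelineAgent()
observed = ["timeout", "schema_drift", "disk_full"]   # Observe (simulated signals)
results = [agent.step(s) for s in observed]
for r in results:
    print(r.signal, "->", r.action, "ok" if r.succeeded else "escalated")
```

Note that an unrecognized signal falls through to `notify_human` rather than a guessed fix; escalating on uncertainty is a deliberate design choice in this sketch.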

AI-Powered Self-Healing Pipelines for Data Reliability

Self-healing is the immediate payoff. Engineers today spend a large portion of their time identifying and fixing issues. Agentic systems eliminate most of that effort.

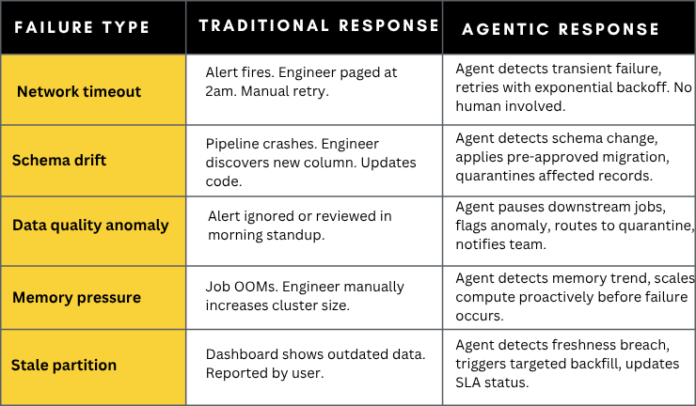

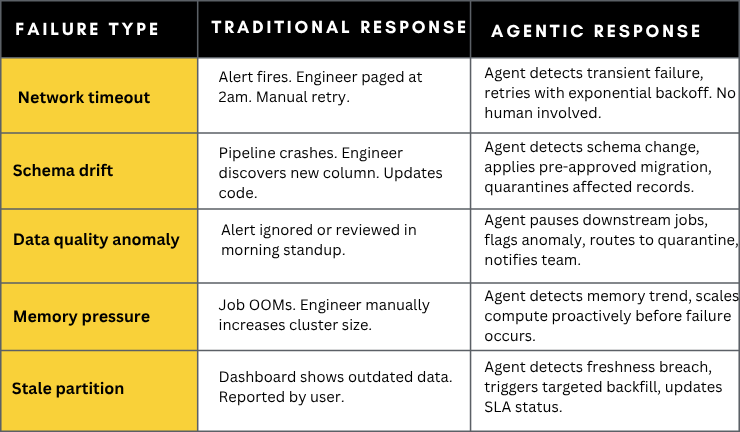

Failure scenarios and autonomous responses

Autonomous Data Pipeline Generation: AI-Driven Pipeline Creation from Intent

Autonomous Pipeline Generation

Beyond self-healing, agentic systems can generate entire pipeline components from natural language specifications or by analyzing raw data patterns. Tools like Databricks Genie Code (launched March 2026) and Snowflake Cortex Code represent the forefront of this capability.

Genie Code reasons through problems, plans multi-step approaches, writes and validates production-grade code, and maintains the result, all while keeping humans in control of the decisions that matter. On real-world data science tasks, it more than doubled the success rate of leading coding agents from 32.1% to 77.1%.

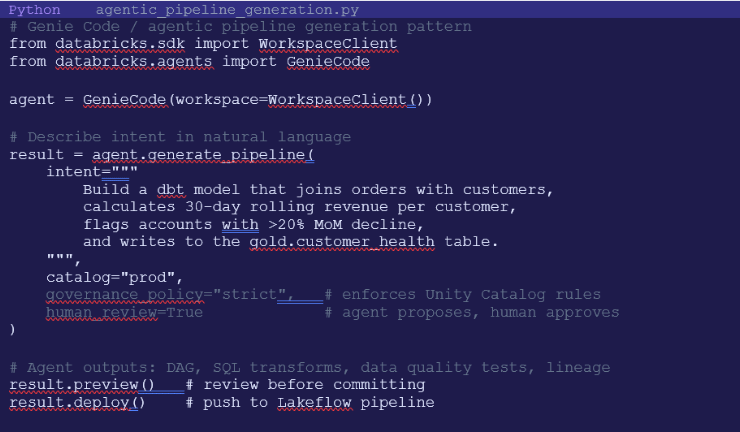

Example: Agent-generated dbt model

Data transformation agents can analyze raw data patterns, then suggest and generate dbt models and tests automatically, aligned with organizational best practices. Here is what agent-assisted pipeline generation looks like:
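As a rough illustration only, the Python sketch below uses plain string templates as a stand-in for what an agent would generate. The helper names `render_staging_model` and `render_tests`, the `stg_` naming prefix, and the choice to put `not_null` on every column are assumptions made for this example, not output from any specific tool.

```python
def render_staging_model(source: str, table: str, columns: dict[str, str]) -> str:
    """Render a dbt staging model that casts each raw column to a target type."""
    select_lines = ",\n".join(
        f"    cast({name} as {sql_type}) as {name}"
        for name, sql_type in columns.items()
    )
    # Quadruple braces in an f-string emit the literal {{ }} that dbt's Jinja expects.
    return (
        f"select\n{select_lines}\n"
        f"from {{{{ source('{source}', '{table}') }}}}\n"
    )


def render_tests(table: str, key: str, columns: dict[str, str]) -> dict:
    """Build the structure of a dbt schema file as a dict (assumed test policy)."""
    return {
        "version": 2,
        "models": [{
            "name": f"stg_{table}",
            "columns": [
                {"name": c, "tests": ["unique", "not_null"] if c == key else ["not_null"]}
                for c in columns
            ],
        }],
    }


# Hypothetical profile an agent might infer from raw data.
inferred = {"order_id": "bigint", "amount": "numeric", "ordered_at": "timestamp"}
model_sql = render_staging_model("raw", "orders", inferred)
schema = render_tests("orders", key="order_id", columns=inferred)
print(model_sql)
```

In practice the generated SQL and schema would go through review and dbt’s own test run before being merged, which is where the human checkpoint sits.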

Multi-Agent Data Pipeline Orchestration: Coordinating AI Agents for Scalable, Autonomous Data Engineering

Modern agentic pipelines don’t rely on a single AI agent. They operate as coordinated systems of specialized agents, each responsible for a specific function across the data lifecycle. This approach mirrors how high-performing data teams work, but executes at machine speed with continuous coordination and no handoffs.

At the center is the orchestrator agent, which acts as the control layer. It assigns tasks, manages dependencies, resolves conflicts between agents, and maintains a global view of pipeline health. It ensures that all components work in sync and that decisions align with the pipeline’s intent and governance policies.

Supporting it are domain-specific agents:

- Ingestion Agents handle data intake from multiple sources. They monitor schema changes, adjust parsing logic dynamically, and ensure incoming data remains compatible with downstream systems. This reduces breakages caused by upstream changes.

- Data Quality Agents continuously validate data against defined standards. They detect anomalies, enforce data contracts, quarantine bad records, and trigger corrective actions when quality thresholds are violated. This prevents bad data from propagating across the pipeline.

- Transformation Agents generate, optimize, and maintain transformation logic. They build SQL queries, dbt models, and feature engineering workflows while continuously improving performance and efficiency based on usage patterns.

The real complexity lies in coordination. These agents often operate on overlapping responsibilities and shared resources. The orchestration layer must manage dependencies, prioritize tasks, and resolve conflicts in real time. For example, a quality agent may flag an issue while a transformation agent is mid-execution. The orchestrator decides whether to pause, reroute, or continue processing based on impact and policy.
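The pause, reroute, or continue decision could look something like the following Python sketch. The function name, its inputs, and the thresholds are all illustrative assumptions; a real orchestrator would read these from policy rather than hard-code them.

```python
from enum import Enum


class Decision(Enum):
    PAUSE = "pause"
    REROUTE = "reroute"
    CONTINUE = "continue"


def resolve_conflict(severity: str, affected_fraction: float,
                     quarantine_available: bool) -> Decision:
    """Decide what to do with an in-flight transformation after a quality flag.

    Thresholds are illustrative policy values, not recommendations.
    """
    if severity == "critical" or affected_fraction > 0.20:
        return Decision.PAUSE       # too risky to keep writing downstream
    if affected_fraction > 0.01 and quarantine_available:
        return Decision.REROUTE     # divert bad records, keep the rest flowing
    if affected_fraction > 0.01:
        return Decision.PAUSE       # nowhere safe to divert the bad records
    return Decision.CONTINUE        # impact is negligible; log and proceed


# A quality agent flags 5% of rows while a transformation is mid-execution.
decision = resolve_conflict("warning", affected_fraction=0.05, quarantine_available=True)
print(decision.value)
```

Keeping the decision in one pure function makes it easy to test and to audit, which matters once the orchestrator is allowed to act on it.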

This multi-agent architecture enables parallel execution, faster recovery, and greater system resilience. Instead of a single point of failure, intelligence is distributed across multiple agents that collaborate continuously. The result is a data pipeline that is not just automated, but coordinated, adaptive, and scalable by design.

Governance, Trust & the Human-in-the-Loop

The most common objection to agentic pipelines is: how do you trust a system that modifies production databases without asking permission? The answer is Policy-Based Action Frameworks, a governance layer that defines exactly what agents can and cannot do autonomously.

Policy enforcement levels:

- Notify only: the agent identifies the issue, logs it, and alerts a human. No autonomous action is taken.

- Suggest: the agent proposes a specific remediation with reasoning. A human reviews and approves before execution.

- Auto-approve low-risk: the agent autonomously executes pre-approved actions (retries, minor schema fixes) and logs all actions.

- Full autonomy with audit: the agent acts freely within defined policy boundaries. Every action is logged with reasoning traces.

Most organizations start at ‘notify only’ and progressively unlock higher autonomy as trust in the system is established. This graduated approach is essential: it allows teams to validate the agent’s logic in shadow mode before granting write access to production systems.
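One way to picture the four enforcement levels is as a gate that every proposed action passes through. The Python sketch below is a simplified assumption of how such a gate might work; the `Level` enum, `LOW_RISK_ACTIONS`, and the outcome strings are hypothetical names, and falling back to approval for anything outside the pre-approved list is a choice made for this example.

```python
from enum import IntEnum


class Level(IntEnum):
    NOTIFY_ONLY = 0
    SUGGEST = 1
    AUTO_APPROVE_LOW_RISK = 2
    FULL_AUTONOMY_WITH_AUDIT = 3


LOW_RISK_ACTIONS = {"retry_job", "minor_schema_fix"}   # assumed pre-approved list
audit_log: list[dict] = []


def gate(level: Level, action: str, reasoning: str, within_policy: bool = True) -> str:
    """Return what the agent may do with a proposed action, and log the outcome."""
    if level == Level.NOTIFY_ONLY:
        outcome = "alert_human"
    elif level == Level.SUGGEST:
        outcome = "await_approval"
    elif level == Level.AUTO_APPROVE_LOW_RISK:
        outcome = "execute" if action in LOW_RISK_ACTIONS else "await_approval"
    else:  # FULL_AUTONOMY_WITH_AUDIT: free to act, but only inside policy boundaries
        outcome = "execute" if within_policy else "await_approval"
    # Every decision is recorded with its reasoning trace, whatever the level.
    audit_log.append({"level": level.name, "action": action,
                      "reasoning": reasoning, "outcome": outcome})
    return outcome


print(gate(Level.NOTIFY_ONLY, "retry_job", "timeout looks transient"))
print(gate(Level.AUTO_APPROVE_LOW_RISK, "retry_job", "timeout looks transient"))
print(gate(Level.AUTO_APPROVE_LOW_RISK, "drop_partition", "partition is corrupt"))
```

Raising autonomy then becomes a one-line configuration change, while the audit trail stays identical across levels.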

As agentic operating models mature, data engineers shift from hand-coding transformations to supervising autonomous systems. That means designing guardrails, reviewing agent decisions, and resolving novel edge cases. Explainability becomes core to the model: reasoning traces, auditable logs, and human-in-the-loop checkpoints are required for trust and compliance.

AI-Powered Data Engineering Tools, Roles, and Impact

Agentic Data Platforms

Tools included: Databricks Genie Code, Snowflake Cortex Code

These platforms handle end-to-end pipeline generation, optimization, and deployment. They translate business intent into production-ready workflows using AI. The impact is faster development cycles, reduced manual coding, and greater consistency in pipeline design.

Pipeline Orchestration Tools

Tools included: Apache Airflow, Dagster, Prefect

These tools manage scheduling, dependencies, and execution of data workflows. In agentic systems, they act as execution backbones where AI agents trigger reruns, adjust workflows, and optimize operations in real time. Their role is critical for stability and controlled execution.

Self-Healing and Observability Tools

Tools included: Acceldata ADM, Monte Carlo, OpenTelemetry

These tools provide deep visibility into pipeline health, data quality, and system performance. They enable anomaly detection and support automated remediation through agentic decision-making. The impact is reduced downtime and the elimination of manual debugging.

Data Transformation and AI Modeling Tools

Tools included: dbt with AI agents, Spark with LLMs

These tools automate the creation and optimization of data transformations. They generate SQL models, enforce data tests, and improve performance based on usage patterns. This reduces engineering effort while improving data reliability and scalability.

Data Governance and Lineage Tools

Tools included: Unity Catalog, Apache Atlas, OpenLineage

These systems enforce access controls, maintain lineage, and ensure compliance. They define what actions agents can take and provide full auditability of every decision. Their impact is trust, transparency, and safe automation in production environments.

Memory and Context Stores

Tools included: LanceDB, Chroma, vector databases

These systems store historical context, past incidents, and decision outcomes. They allow AI agents to learn from previous scenarios and improve over time. The result is faster resolution of recurring issues and continuous system optimization.
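The idea of recalling a past incident by similarity can be shown without any particular vector database. The Python sketch below uses a toy word-count embedding and cosine similarity purely for illustration; the `IncidentMemory` class and its methods are hypothetical, and a production system would use a learned embedding model and one of the stores listed above.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class IncidentMemory:
    def __init__(self) -> None:
        self._items: list[tuple[Counter, str]] = []

    def remember(self, description: str, resolution: str) -> None:
        self._items.append((embed(description), resolution))

    def recall(self, description: str, min_score: float = 0.5):
        """Return (resolution, score) for the most similar past incident, or None."""
        query = embed(description)
        best = max(self._items, key=lambda item: cosine(query, item[0]), default=None)
        if best is None:
            return None
        score = cosine(query, best[0])
        return (best[1], score) if score >= min_score else None


memory = IncidentMemory()
memory.remember("orders job failed with timeout reading from kafka", "retry with backoff")
memory.remember("column amount changed type from float to string", "quarantine batch and cast")

hit = memory.recall("payments job failed with timeout reading from kafka")
miss = memory.recall("disk full on worker node")
print(hit, miss)
```

The `min_score` cutoff is what keeps the agent from reusing an unrelated fix: below it, the incident is treated as new and goes back through full reasoning.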

Agentic Data Pipeline Implementation Roadmap

Step 1: Start with AI-Assisted Pipeline Development

Adopt AI coding tools like GitHub Copilot, Databricks Genie Code, or Snowflake Cortex Code to accelerate pipeline creation. This delivers immediate productivity gains without changing existing architecture. It’s the lowest-risk entry point into agentic systems.

Step 2: Implement Automated Data Quality Monitoring

Deploy ML-based data quality and anomaly detection tools to replace static rules. This improves accuracy in detecting issues and significantly reduces alert fatigue. It builds the foundation for intelligent decision-making.

Step 3: Deploy Self-Healing Agents in Shadow Mode

Introduce agentic systems in “suggest only” mode where they recommend fixes but don’t execute them. Monitor their decisions over several weeks to validate accuracy and build trust. This step ensures safe evaluation before automation.

Step 4: Define Governance and Policy Frameworks

Establish clear rules for what actions can be automated and what requires human approval. Start with strict controls and gradually allow low-risk autonomous actions. Governance is critical to ensure safe and compliant operations.

Step 5: Enable the Autonomous Pipeline Loop

Activate the full observe-reason-act-remember loop with controlled autonomy. Allow agents to execute approved actions, learn from outcomes, and continuously improve. Conduct regular audits to ensure decisions remain aligned with business intent and policies.
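The shadow-mode validation in Step 3 can be made measurable by comparing what the agent suggested with what engineers actually did. The Python sketch below is one possible approach, not a prescribed method: `ShadowRecord`, `agreement_by_action`, `promotable`, and the 95% and 3-sample thresholds are all assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ShadowRecord:
    incident_id: str
    agent_suggestion: str   # what the agent would have done
    human_action: str       # what the on-call engineer actually did


def agreement_by_action(records: list[ShadowRecord]) -> dict[str, float]:
    """Share of suggestions that matched the human action, per suggested action."""
    totals = Counter(r.agent_suggestion for r in records)
    matches = Counter(r.agent_suggestion for r in records
                      if r.agent_suggestion == r.human_action)
    return {action: matches[action] / n for action, n in totals.items()}


def promotable(records: list[ShadowRecord], min_agreement: float = 0.95,
               min_samples: int = 3) -> list[str]:
    """Actions with enough samples and agreement to consider for auto-approval."""
    totals = Counter(r.agent_suggestion for r in records)
    rates = agreement_by_action(records)
    return sorted(a for a, rate in rates.items()
                  if totals[a] >= min_samples and rate >= min_agreement)


records = [
    ShadowRecord("i1", "retry_job", "retry_job"),
    ShadowRecord("i2", "retry_job", "retry_job"),
    ShadowRecord("i3", "retry_job", "retry_job"),
    ShadowRecord("i4", "scale_up", "scale_up"),
    ShadowRecord("i5", "scale_up", "retry_job"),
    ShadowRecord("i6", "scale_up", "scale_up"),
    ShadowRecord("i7", "quarantine_batch", "quarantine_batch"),
]
rates = agreement_by_action(records)
print(rates)
print(promotable(records))
```

Grouping by action matters because trust is rarely uniform: an agent may be reliable at retries long before it is reliable at scaling decisions, and the sample-size floor keeps a single lucky match from unlocking automation.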

How ISHIR Helps You Build Agentic Data Pipelines

ISHIR helps organizations transition from traditional data pipelines to agentic, AI-driven systems by combining Agentic AI development with deep data engineering expertise. We design and build intelligent agents, modernize pipeline architectures, and integrate observability, orchestration, and self-healing capabilities to create scalable, autonomous data platforms aligned with business outcomes.

Beyond implementation, ISHIR enables real business impact through advanced data analytics and hands-on Data + AI workshops. We help teams unlock actionable insights, define clear adoption roadmaps, and build internal capability to manage and scale agentic systems with confidence and control.

Struggling with fragile data pipelines, constant failures, and manual fixes slowing your team down?

ISHIR helps you build AI-powered, self-healing data pipelines that automate operations and scale with confidence.

FAQs on Agentic Data Pipelines and AI-Driven Data Engineering

Q. What is an agentic data pipeline and how is it different from traditional pipelines?

An agentic data pipeline is an AI-driven system that can observe, reason, act, and learn without constant human intervention. Unlike traditional pipelines that follow fixed workflows, agentic pipelines adapt dynamically to changes in data, schema, and system conditions. They don’t just execute tasks; they make decisions based on context and intent. This shift reduces manual debugging, improves reliability, and enables real-time optimization. It’s a move from static automation to intelligent autonomy.

Q. How do AI agents actually improve data pipeline reliability?

AI agents improve reliability by continuously monitoring system health and data quality, then taking corrective action immediately. Instead of waiting for alerts and manual fixes, they identify root causes and resolve issues such as failures, anomalies, or schema changes in real time. They also learn from past incidents, which means recurring problems are handled faster and more accurately. This significantly reduces downtime, data inconsistencies, and operational overhead.

Q. Are agentic data pipelines safe to use in production environments?

Yes, but only when implemented with strong governance frameworks. Most organizations start with limited autonomy where agents suggest actions instead of executing them. Over time, low-risk actions like retries or scaling are automated, while critical changes still require approval. Every action is logged, traceable, and aligned with policy rules. This controlled approach ensures safety, compliance, and trust while gradually increasing automation.

Q. What are the main challenges in adopting agentic pipelines?

The biggest challenges are trust, governance, and system integration. Teams often hesitate to allow AI systems to modify production data without oversight. There is also complexity in integrating AI agents with existing orchestration, monitoring, and data systems. Another challenge is defining clear intent and policies so agents can make correct decisions. Successful adoption requires a phased approach with validation, monitoring, and gradual rollout.

Q. Do agentic pipelines replace data engineers?

No, they change the role of data engineers rather than replacing them. Engineers move from writing and fixing pipelines to designing systems, defining policies, and supervising AI agents. They focus more on architecture, governance, and optimization instead of repetitive operational tasks. This shift increases productivity and allows teams to handle larger, more complex data environments with fewer resources.

Q. What tools are commonly used to build AI-driven data pipelines?

The ecosystem includes agentic platforms like Databricks Genie Code and Snowflake Cortex, orchestration tools like Airflow and Dagster, and observability tools like Monte Carlo and OpenTelemetry. Transformation tools such as dbt combined with AI agents automate modeling and SQL generation. Governance tools ensure compliance, while vector databases store memory for learning. These tools work together to enable intelligent, autonomous pipeline behavior.

Q. How can organizations start implementing agentic data pipelines today?

The best approach is to start small and build progressively. Begin with AI-assisted development to speed up pipeline creation, then implement automated data quality monitoring. Introduce agentic systems in a suggestion mode to validate their decisions before enabling automation. Define governance policies early to control risk. Once trust is established, gradually activate full autonomy with continuous monitoring and audits. This phased strategy ensures safe and effective adoption.