You say two words into a microphone: “autumn forest.” A couple of seconds later, the colours appear on your actual face, projected there in real time, shifting across your cheeks and eyelids and lips as you check yourself in the mirror. No smudging, no testers, no scrolling through a grid of swatches. Just the warmth of October light translated, somehow, into blush and shadow.

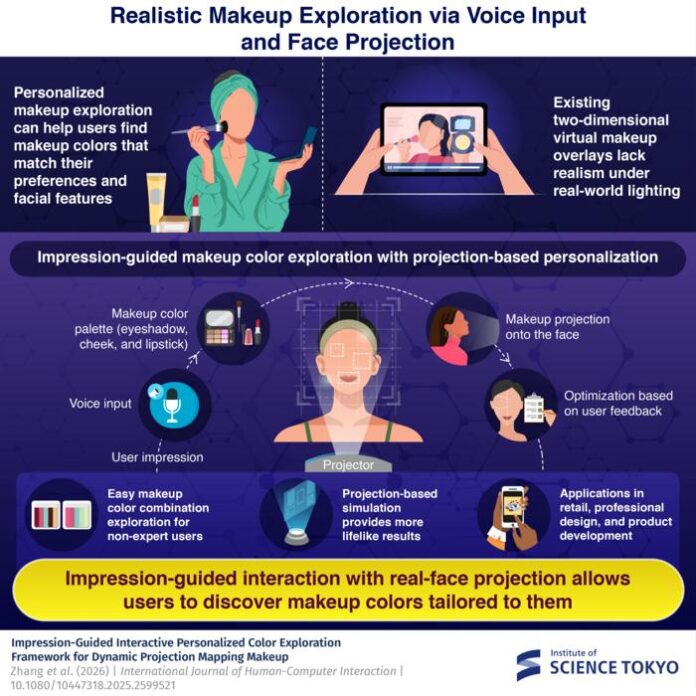

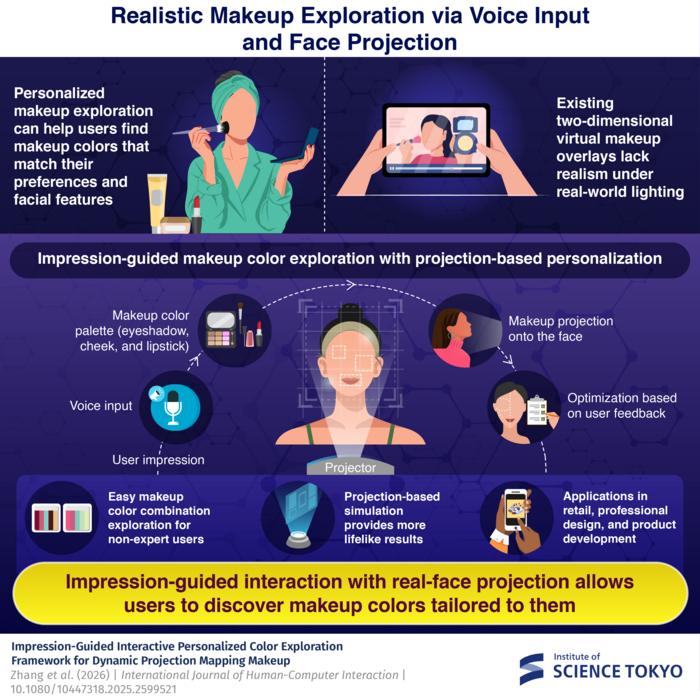

The system doing this was developed by researchers at the Institute of Science Tokyo, and it represents something of a rethink of how virtual make-up technology might actually work. Most apps in this space, the kind that let you swipe through shades before buying, overlay colours onto a two-dimensional image of your face on a phone screen. Convenient, yes, but the effect is a bit like painting onto a photograph: what you see is not quite how it would look on real skin, under real light.

The Tokyo team’s approach is different in a fairly fundamental way. Rather than rendering make-up on a screen, they project it directly onto the user’s face using dynamic projection mapping, a technique that tracks facial features in real time at 1,000 frames per second and adjusts the projected image to match movements, tilts, and small rotations of the head. The result is that the colours land on actual skin, with actual skin tone and texture doing what skin tone and texture always do to colour: transforming it, absorbing it, reflecting it back differently than expected.
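Part of why the tracking rate matters is simple arithmetic: the face keeps moving between frames, so the projection trails it by at least one frame interval. The sketch below estimates that per-frame drift for an assumed head speed of 0.5 m/s (a hypothetical figure, not one from the study), ignoring camera and processing latency, which in a real system add to the error.

```python
def drift_per_frame_mm(head_speed_m_per_s: float, fps: float) -> float:
    """Distance (mm) a face moves during one frame interval at a given frame rate.

    Ignores sensing and processing latency. The head speed used below is an
    illustrative assumption, not a measurement from the Science Tokyo work.
    """
    frame_interval_s = 1.0 / fps
    return head_speed_m_per_s * frame_interval_s * 1000.0


# Compare common display rates with the 1,000 fps used for tracking.
for fps in (30, 60, 1000):
    print(f"{fps:>5} fps: {drift_per_frame_mm(0.5, fps):.2f} mm per frame")
```

At 60 fps the projected colour could trail the face by roughly 8 mm per frame under this assumption; at 1,000 fps the figure drops to about half a millimetre.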

That last point turns out to matter a lot. Anyone who has ever bought a lipstick that looked one shade in the store and another entirely on their face will understand the problem. Different skin tones interact with pigments in ways that no flat-screen overlay can reliably simulate. A projected red on pale skin reads differently from the same projection on a deeper complexion, not because the projected colour changes, but because the surface absorbs and reflects light differently. The Science Tokyo system handles this by building the interaction into the exploration process itself: you see what the colour actually looks like on your face, and the system learns from what you choose.
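A crude way to see why the same projection reads differently on different complexions is to treat skin as a per-channel reflectance: each of the red, green, and blue components of the projected light is scaled by how much of that channel the surface reflects. This is a simplified, diffuse-only approximation that ignores ambient light, subsurface scattering, and the projector's own colour response. The reflectance values are hypothetical placeholders, not measured data. As the article describes it, the system relies on the user judging the result in the mirror rather than on a model like this.

```python
RGB = tuple[int, int, int]


def reflected_colour(projected: RGB, reflectance: tuple[float, float, float]) -> RGB:
    """Approximate the colour seen on skin as projected light times per-channel reflectance.

    First-order illustration only: real skin also scatters light beneath the
    surface and is lit by ambient light in addition to the projector.
    """
    return tuple(round(p * r) for p, r in zip(projected, reflectance))


projected_red = (220, 40, 60)
lighter_skin = (0.80, 0.62, 0.52)  # hypothetical reflectance values
deeper_skin = (0.45, 0.30, 0.22)   # hypothetical reflectance values

print(reflected_colour(projected_red, lighter_skin))  # (176, 25, 31)
print(reflected_colour(projected_red, deeper_skin))   # (99, 12, 13)
```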

The starting point is language. Users are encouraged to think in impressions rather than specifications, describing scenes or moods in natural terms: “sakura in spring,” “night-time rose,” “mild sunshine.” The system feeds this text through a generative image model, which produces a photorealistic scene representing the described atmosphere, then extracts five dominant colours from that scene. These colours are then filtered through a dataset of 313 real cosmetic product colours collected from major international brands. This ensures that what gets projected is, roughly speaking, something you could actually buy, rather than a blue-green that no lipstick has ever approached.
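The article doesn't say how the five colours are extracted or how the product filter works. One common way to pull dominant colours from an image is k-means clustering over pixel values. The sketch below does that in plain Python, then snaps each cluster centre to the nearest entry in a product catalogue by straight RGB distance. Both choices are assumptions: a perceptual colour space such as CIELAB would usually make a better distance metric. The three-shade catalogue and pixel data are hypothetical stand-ins for a generated scene and the 313-colour dataset.

```python
Colour = tuple[float, float, float]


def dist2(a: Colour, b: Colour) -> float:
    """Squared Euclidean distance between two RGB colours."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def dominant_colours(pixels: list[Colour], k: int = 5, iters: int = 20) -> list[Colour]:
    """Plain k-means over pixel colours; returns cluster centres, largest cluster first.

    Assumes len(pixels) >= k. Initial centres are spread evenly through the pixel list.
    """
    step = max(len(pixels) // k, 1)
    centres = [tuple(float(c) for c in pixels[i * step]) for i in range(k)]
    for _ in range(iters):
        # Assign every pixel to its nearest centre.
        groups: list[list[Colour]] = [[] for _ in range(k)]
        for p in pixels:
            nearest = min(range(k), key=lambda c: dist2(p, centres[c]))
            groups[nearest].append(p)
        # Move each centre to the mean of its members; keep empty clusters in place.
        new_centres = [
            tuple(sum(channel) / len(g) for channel in zip(*g)) if g else centres[i]
            for i, g in enumerate(groups)
        ]
        if new_centres == centres:
            break
        centres = new_centres
    # Rank clusters by how many pixels were assigned to them in the last pass.
    order = sorted(range(k), key=lambda i: len(groups[i]), reverse=True)
    return [centres[i] for i in order]


def snap_to_catalogue(colours: list[Colour], catalogue: dict[str, Colour]) -> list[str]:
    """Name the catalogue shade closest to each extracted colour (RGB distance, an assumption)."""
    return [min(catalogue, key=lambda name: dist2(c, catalogue[name])) for c in colours]


# Hypothetical pixels: a mostly red scene with some olive green.
pixels = [(200, 30, 40)] * 6 + [(210, 40, 50)] * 4 + [(90, 140, 60)] * 5
catalogue = {  # hypothetical shades, not real products
    "red_01": (200, 35, 45),
    "nude_02": (210, 160, 140),
    "olive_03": (100, 130, 70),
}
centres = dominant_colours(pixels, k=2)
print(centres)                                # [(204.0, 34.0, 44.0), (90.0, 140.0, 60.0)]
print(snap_to_catalogue(centres, catalogue))  # ['red_01', 'olive_03']
```

Snapping to the nearest catalogue entry is one simple reading of "filtered through"; a real pipeline might instead discard colours with no close product match.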

“Users can easily find preferred make-up colours from a large number of combinations through interactive optimization using impression words and projection-based make-up,” says Yoshihiro Watanabe, associate professor at the Institute of Science Tokyo. “This can help non-expert users efficiently find satisfying results in the vast space of colour combinations.”

The “vast space” is not an exaggeration. For three make-up components (eyeshadow, blush, and lipstick), the possible combinations within a standard colour space form what the researchers describe as a nine-dimensional search problem. For someone who doesn’t think in hue, saturation, and lightness parameters, that is about as navigable as it sounds. The impression-based entry point sidesteps this by collapsing an enormous search space into a handful of projected options, which the user can then refine using keyboard inputs while looking in the mirror.
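The nine dimensions come from three components, each carrying a hue, a saturation, and a lightness value. A single look is therefore one point in a nine-dimensional space. The sketch below uses hypothetical class names to flatten a look into that vector and to show how fast even a coarse grid grows. Normalising each parameter to [0, 1] is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass
class HSL:
    """One colour as hue, saturation, lightness, each normalised to [0, 1] (an assumption)."""
    hue: float
    saturation: float
    lightness: float


@dataclass
class Look:
    """A full make-up look: one colour per component (hypothetical structure)."""
    eyeshadow: HSL
    blush: HSL
    lipstick: HSL

    def to_vector(self) -> list[float]:
        # Flatten 3 components x 3 parameters into one 9-D point in the search space.
        return [
            v
            for c in (self.eyeshadow, self.blush, self.lipstick)
            for v in (c.hue, c.saturation, c.lightness)
        ]


def grid_size(levels_per_parameter: int, dims: int = 9) -> int:
    """Number of distinct looks if every parameter is limited to a fixed number of levels."""
    return levels_per_parameter ** dims


look = Look(HSL(0.08, 0.45, 0.40), HSL(0.02, 0.50, 0.70), HSL(0.98, 0.70, 0.45))
print(len(look.to_vector()))  # 9
print(grid_size(10))          # 1000000000
```

Even with only ten settings per slider, exhaustively trying every look would mean a billion projections. This is why the system starts from a handful of impression-derived candidates rather than a grid.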

The refinement step uses Bayesian optimization, a technique previously applied in fields such as lighting design and melody composition, to learn preferences iteratively. After each round of options, the algorithm homes in on what seems to resonate, balancing exploration of new possibilities against exploitation of known preferences. In user studies with 15 participants, the impression-guided system consistently outperformed manual colour-slider adjustment on satisfaction, ease of use, and what the researchers measured as “stimulation” and “originality.” Perhaps more interestingly, several participants reported discovering colour combinations they would never have chosen on their own. This was not because the system found something better by the researchers’ reckoning. It was because the freeform, impressionistic input led somewhere the person hadn’t thought to go.
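The article doesn't specify which variant of Bayesian optimization the system uses, or how a user's choice between options becomes a training signal. The sketch below is meant only to illustrate the exploration/exploitation trade-off, so it swaps in the simplest textbook version. A Gaussian-process surrogate is fitted to numeric ratings of a single normalised colour parameter, and an upper-confidence-bound (UCB) rule picks what to show next. The kernel length-scale, noise level, `kappa` weighting, and ratings are illustrative assumptions. A real system would also search all nine dimensions rather than one.

```python
import math


def rbf(a: float, b: float, length: float = 0.2) -> float:
    """Squared-exponential kernel with unit signal variance (length-scale is an assumption)."""
    return math.exp(-((a - b) ** 2) / (2 * length**2))


def cholesky(m: list[list[float]]) -> list[list[float]]:
    """Lower-triangular L with L @ L.T == m, for a small symmetric positive-definite m."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(m[i][i] - s)
            else:
                low[i][j] = (m[i][j] - s) / low[j][j]
    return low


def forward_solve(low: list[list[float]], b: list[float]) -> list[float]:
    """Solve L x = b by forward substitution."""
    x: list[float] = []
    for i in range(len(b)):
        x.append((b[i] - sum(low[i][k] * x[k] for k in range(i))) / low[i][i])
    return x


def backward_solve(low: list[list[float]], b: list[float]) -> list[float]:
    """Solve L.T x = b by back substitution."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(low[k][i] * x[k] for k in range(i + 1, n))
        x[i] = (b[i] - s) / low[i][i]
    return x


def gp_posterior(xs: list[float], ys: list[float], x: float, noise: float = 1e-4):
    """Posterior mean and variance of a zero-mean GP at x, given ratings ys at settings xs."""
    k_mat = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
             for i, a in enumerate(xs)]
    low = cholesky(k_mat)  # recomputed per call for brevity, not speed
    alpha = backward_solve(low, forward_solve(low, ys))  # K^-1 y
    k_star = [rbf(x, a) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = forward_solve(low, k_star)  # L^-1 k*
    var = max(rbf(x, x) - sum(vi * vi for vi in v), 0.0)
    return mean, var


def next_candidate(xs, ys, candidates, kappa: float = 2.0) -> float:
    """Pick the candidate maximising UCB = mean + kappa * std (exploit vs explore)."""
    def ucb(c: float) -> float:
        mean, var = gp_posterior(xs, ys, c)
        return mean + kappa * math.sqrt(var)
    return max(candidates, key=ucb)


rated_x = [0.2, 0.8]  # hypothetical settings already shown (e.g. a normalised lipstick hue)
ratings = [0.9, 0.1]  # hypothetical user ratings, higher is better
candidates = [i / 20 for i in range(21)]
print(next_candidate(rated_x, ratings, candidates, kappa=0.0))   # 0.2 (exploit)
print(next_candidate(rated_x, ratings, candidates, kappa=10.0))  # 0.5 (explore)
```

With `kappa=0` the rule purely exploits and proposes the best-rated setting again. A large `kappa` favours the least-explored region between the two rated points. Practical systems tune this balance, or shrink it over rounds, so that suggestions settle down as preferences become clearer.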

There are real limitations to the current setup, and the researchers are upfront about them. The high-speed projector and camera required for real-time facial tracking are expensive; this is not yet consumer hardware. Some participants felt that once they had homed in on an overall combination they liked, they wanted to adjust a single component independently, which the system doesn’t easily allow. And certain prompts, particularly more abstract, impressionistic ones like “mild sunshine,” proved genuinely difficult to translate into consistent colour choices, because people associate the same atmospheric description with quite different palettes.

“With such a system, users can simply describe their desired impression of make-up colours in natural language and observe the effects in the mirror,” Watanabe says. The gap between that description and what is actually achievable, practically speaking, is still considerable. Cosmetics professionals interviewed for the research noted the system’s potential for early-stage product development, such as sketching out colour ranges from abstract design briefs. They also flagged the accuracy gap between its current output and what professional workflows require.

What makes this more than a clever interface experiment is what it says about personalization in an era when recommendation systems and virtual try-on tools are proliferating rapidly. The problem with most of these tools is that they recommend from existing categories, suggesting options based on trends or facial feature analysis, rather than following an open-ended creative impulse wherever it leads. An impression-guided system makes room for something different: the serendipitous find, the colour combination that only emerges because the starting point was “October light in a forest” rather than “warm auburn tones.” The researchers suggest the approach could extend beyond colour, eventually incorporating make-up textures, shapes, and even other sensory inputs like music or motion, as ways of translating atmosphere into appearance.

Whether the technology ever reaches a price point that makes it viable outside research labs or high-end retail settings remains to be seen. But the question it poses is the more durable one: how much of what we want from beauty technology is accuracy, and how much is the ability to follow a feeling somewhere we couldn’t have anticipated?

DOI / Source: https://doi.org/10.1080/10447318.2025.2599521

Frequently Asked Questions

Projected light interacts with whatever surface it hits, and skin is not a neutral canvas. Different skin tones and undertones absorb and reflect light differently, which means the same projected colour can appear warmer, cooler, lighter, or deeper on different people. This is precisely the problem the Tokyo system is designed to address. By projecting onto your actual face and having you evaluate what you see in a mirror rather than on a screen, the feedback loop accounts for your particular skin in a way that digital overlays cannot.

Standard virtual try-on tools overlay a colour effect onto a two-dimensional image of your face on a phone screen, and while they have improved considerably, they remain screen-based simulations. The Tokyo system projects light directly onto the user’s physical face, which means the colour responds to real skin texture and tone in real time. The impression-based input is also new: rather than selecting from a list, you describe a mood or scene and the system generates options from that.

That was partly the point. User studies found the system was particularly effective for people with little make-up experience, who often find both physical try-on and digital swatch-browsing overwhelming. The impression input removes the need to already know colour theory or have a vocabulary for one's preferences, letting users navigate by atmosphere and feeling rather than technical parameters.

Mainly cost and infrastructure. The system requires a high-speed projector capable of 1,000 frames per second, a high-speed camera, and a controlled lighting environment, none of which are cheap or compact. The researchers acknowledge that the hardware is currently research-grade and that developing consumer-ready projection devices at accessible price points is one of the main challenges for wider adoption.

Key Takeaways

- The Tokyo system projects virtual make-up directly onto users’ faces in real time, so the colours interact with actual skin tone and texture.

- Users enter natural-language impressions like ‘autumn forest’ to discover colours, which the system generates and then filters against real products.

- This impression-guided system simplifies the complex choices in make-up, making it easier for non-experts to find suitable colours and combinations.

- Although promising, the technology requires expensive equipment and is not yet viable for general consumer use.

- The approach could redefine how make-up technology addresses personal preferences beyond accuracy alone.