When OpenAI announced its persistent memory feature for ChatGPT in early 2025, it was presented as a convenience. Users could now have the model remember prior context, preferences, and facts, making interactions smoother and more personal. On the surface, it was a feature update. But at a deeper level, it hinted at a shift that mirrors the most powerful transitions in the history of computing: the migration of control from execution to understanding.

The Historical Migration of Strategic Value

Every major technological era redefines where value resides. In the age of the personal computer, it was the operating system, the layer that mediated between hardware and software. In the internet era, value migrated to the browser and the search index, which mediated scarce attention. In the smartphone era, the app store became the gatekeeper, mediating distribution. In the cloud era, infrastructure took its turn, abstracting hardware into services and mediating computation.

Each of these shifts shared a common pattern: value flowed toward the layer that mediated the scarce resource of the age. The same pattern is now unfolding in AI. The scarce resource is not compute or data, but context: the live understanding of how data, entities, relationships, and permissions come together in a given moment to make reasoning relevant.

The Context Fabric: The New System of Record

The power of the cloud was that it abstracted infrastructure. The power of AI is that it abstracts reasoning itself. What used to require procedural code can now be expressed probabilistically through prompts and retrieval. But abstraction always introduces a new dependency; whatever layer provides convenience becomes the new point of control.

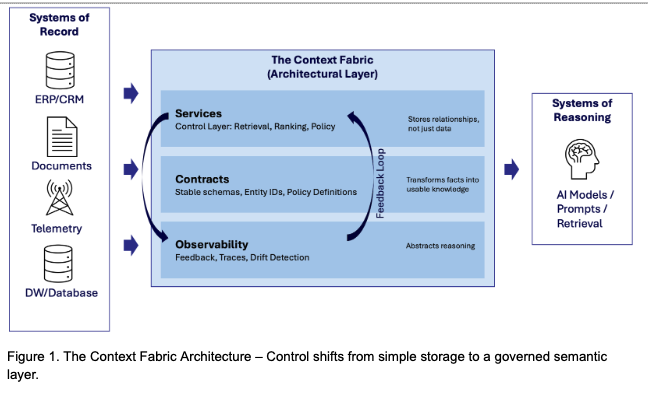

For AI, this control lives in how context is assembled, stored, and retrieved. A model without context is like a processor without memory; it can compute, but it cannot reason about the world. Every enterprise serious about AI will eventually build what might be called a context fabric: an architectural layer that connects systems of record (CRM, ERP, tickets, documents, telemetry, etc.) to systems of reasoning.

This fabric is a new system of record. It stores not the data itself, but the relationships that give data meaning, transforming data into usable knowledge. The fabric’s stability depends on:

- Services: The control layer handling retrieval, ranking, and policy.

- Contracts: The stable schemas, entity identifiers, and policy definitions that keep meaning consistent over time.

- Observability: Feedback, traces, and drift detection to monitor the system’s performance.
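The three pillars above can be made concrete with a small sketch. It is only an illustration under stated assumptions, not a reference design: the names `ContextRecord`, `ContextFabric`, and `retrieve`, the role labels, and the naive term-overlap ranking are all hypothetical and stand in for whatever schemas, policy engine, and ranking an enterprise would actually use.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContextRecord:
    """Contract (hypothetical): stable entity ID, source system, policy label."""
    entity_id: str
    source: str                 # illustrative values such as "crm" or "tickets"
    text: str
    allowed_roles: frozenset


@dataclass
class ContextFabric:
    """Toy fabric: one retrieval service plus an in-memory trace log."""
    records: list = field(default_factory=list)
    traces: list = field(default_factory=list)   # observability

    def retrieve(self, query: str, role: str, top_k: int = 3) -> list:
        """Service: enforce policy, rank by term overlap, record a trace."""
        terms = set(query.lower().split())
        # Policy: only records the caller's role may see are considered.
        permitted = [r for r in self.records if role in r.allowed_roles]
        # Ranking: count shared terms (a stand-in for real relevance scoring).
        scored = [(len(terms & set(r.text.lower().split())), r) for r in permitted]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        results = [r for score, r in scored if score > 0][:top_k]
        # Observability: keep what was asked and what was returned.
        self.traces.append(
            {"query": query, "role": role, "returned": [r.entity_id for r in results]}
        )
        return results


fabric = ContextFabric()
fabric.records.append(
    ContextRecord("acct-1", "crm", "Acme renewal contract due in March", frozenset({"sales"}))
)
fabric.records.append(
    ContextRecord("tkt-9", "tickets", "Acme outage ticket escalated", frozenset({"support", "sales"}))
)
hits = fabric.retrieve("Acme renewal", role="support")
```

In this toy setup the support role never sees the CRM record, even though it matches the query better, because policy is applied before ranking. Every call also leaves a trace that later drift or feedback analysis could consume.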

Beyond mere retrieval, the context fabric enables a critical feedback loop. As observability services within the fabric monitor model responses (using feedback, traces, and drift detection), they identify reasoning gaps. Rich, validated context can then be used to close those gaps, creating a virtuous cycle: context leads to better reasoning, which in turn continually refines the structure of the fabric. This makes the context fabric a continuously self-improving, cumulative asset.

It is important not to confuse the context fabric with Retrieval-Augmented Generation (RAG). RAG is the technique of fetching data to answer a query. The context fabric is the governed system of record that ensures what you fetch is true, secure, and consistent across the enterprise.

Another important point is that, as the fabric becomes the brain of the enterprise, security shifts from defending firewalls to ensuring context integrity. If a bad actor engages in “context poisoning” (injecting false policies or corrupted documents), the AI will confidently hallucinate or leak secrets. Observability and drift detection become the new cybersecurity frontier.
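One narrow slice of context integrity can be sketched as a content fingerprint check: a document is hashed when it is approved, and re-checked before it is handed to the model. This is a minimal sketch under assumptions. `IntegrityLedger`, `register`, and `is_trusted` are hypothetical names, and the approach only detects changes made after approval; it does nothing about content that was already false when it was registered.

```python
import hashlib
import hmac


def fingerprint(text: str) -> str:
    """SHA-256 digest of the document text, as a hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class IntegrityLedger:
    """Hypothetical ledger mapping document IDs to approved fingerprints."""

    def __init__(self) -> None:
        self._approved = {}

    def register(self, doc_id: str, text: str) -> None:
        # Called once, when a document passes review and enters the fabric.
        self._approved[doc_id] = fingerprint(text)

    def is_trusted(self, doc_id: str, text: str) -> bool:
        # Unknown documents and altered documents are both rejected.
        expected = self._approved.get(doc_id)
        if expected is None:
            return False
        return hmac.compare_digest(expected, fingerprint(text))


ledger = IntegrityLedger()
ledger.register("policy-7", "Refunds allowed within 30 days")
original_ok = ledger.is_trusted("policy-7", "Refunds allowed within 30 days")
tampered_ok = ledger.is_trusted("policy-7", "Refunds allowed within 300 days")
```

A retrieval service could skip any document for which `is_trusted` returns `False` and log the event, which ties the integrity check back to the observability layer.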

The Economic Imperative: Why Context is a Moat

For the enterprise CIO, the shift to context is not just an architectural detail; it is the primary economic lever of the AI era. Building a context fabric is an upfront investment, but it creates a persistent economic advantage, a “moat”, by fundamentally changing the cost structure of intelligence. This shift is visualized by the context cache curve (see Figure 2 below).

Just as early cloud computing created data gravity, AI is creating context gravity: the tendency for intelligence to concentrate where the richest, cleanest, most coherent context resides.

What makes the fabric strategically important is that it compounds efficiency over time. In AI systems, the key economic variable is context reuse: how often existing embeddings, features, and retrievals can be leveraged without re-computation. This reuse defines the shape of the cost curve.

A system with a high cache hit rate runs dramatically cheaper and faster than one that must repeatedly re-index or re-query. This creates a substantial economic moat:

- Fixed vs. Marginal Cost: Building a context fabric is a fixed cost. Once established, a high cache hit rate on the fabric (the right side of Figure 2) means subsequent reasoning tasks have a near-zero marginal cost, making intelligence cumulative.

- Economic Moat: Competitors stuck in a fragmented state (low reuse, left side of the curve) incur costs that are several times higher for the same reasoning task. This efficiency is also foundational to the next layer of the stack: agentic AI, which requires reliable, low-latency context to move from a reactive tool to a proactive collaborator.

- The Open-Source Paradox: The rise of open-source models (like Llama or Mistral) strengthens the context advantage. If the model is a commodity, the entire value proposition shifts to the proprietary context. The context fabric allows an enterprise to swap models (portability) without losing the “memory” of the enterprise.
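The cost argument above can be illustrated with a simple expected-cost model: each reasoning task either reuses cached context at a low cost or recomputes it at a high cost, weighted by the cache hit rate. The sketch below is an assumption-laden illustration, not data from the article. The function names, the cost units, and the hit rates of 0.2 and 0.9 are invented purely to show the shape of the curve.

```python
def expected_cost_per_task(hit_rate: float, hit_cost: float, miss_cost: float) -> float:
    """Average cost of one task: hits are cheap, misses pay for recomputation."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_cost + (1.0 - hit_rate) * miss_cost


def total_cost(fixed_cost: float, tasks: int, hit_rate: float,
               hit_cost: float, miss_cost: float) -> float:
    """Upfront fabric investment plus the marginal cost of every task."""
    return fixed_cost + tasks * expected_cost_per_task(hit_rate, hit_cost, miss_cost)


# Illustrative, made-up numbers in arbitrary cost units.
HIT_COST = 0.01    # reuse an existing embedding or retrieval
MISS_COST = 1.0    # re-index or re-query from scratch

fragmented = expected_cost_per_task(0.2, HIT_COST, MISS_COST)   # low reuse
unified = expected_cost_per_task(0.9, HIT_COST, MISS_COST)      # high reuse
ratio = fragmented / unified
```

With these invented inputs the fragmented system pays roughly seven times more per task than the unified one. The exact multiple depends entirely on the assumed costs, but the mechanism is the one the bullets describe: once the fixed cost is paid, a high hit rate pushes the marginal cost of each task toward the cost of a cache hit.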

The Maturity Journey

Most enterprises will not start with a context fabric. They will begin, as they did with cloud, in fragmentation. Teams will build isolated retrieval pipelines, creating “sprawl”. The journey to a true platform follows a predictable maturity model:

- Sprawl: Isolated experiments and fragmented retrieval logic.

- Unification: Standardization begins, requiring common identifiers and shared ontologies to achieve interoperability.

- Platformization: The context fabric is established as a true platform, serving multiple domains with retrieval and policy as shared services.

- Portability: The fabric becomes portable, capable of running across different model providers and clouds without losing meaning.

The Organizational Barrier: Fighting Conway’s Law

The most significant barrier to this journey is not technical, but organizational. Conway’s Law suggests that systems inevitably mirror the communication structures of the organizations that build them. A siloed organization will naturally produce a “sprawl” of disconnected context pipelines.

True “context gravity” requires the organization to fight this inertia. Achieving a unified fabric forces a confrontation: distinct departments must agree on shared definitions of truth. The winners of the AI era will be the organizations capable of re-wiring their human communication structures to match AI’s need for unified context.

Seizing the Context Advantage: Implications for the CIO

Context ownership is the final frontier. Cloud infrastructure made computing elastic. Context infrastructure will make intelligence cumulative.

Whereas the infrastructure layer drives the cloud world, context will drive the AI world, shifting the focus from infrastructure to semantics. The winners will be those who know how to navigate and build the context fabric:

- The Enterprise CIO: Unlike the cloud era, where value migrated to external hyperscalers, the context fabric, built on proprietary systems of record, gives the CIO a rare chance to reclaim strategic value in their own intelligence layer.

- Specialized Vertical Providers: The first to build a robust, governed fabric for a specific regulated vertical (e.g., legal discovery, precision manufacturing, or healthcare) will capture nearly all the value. Their pre-grounded schemas and policy contracts become an impenetrable barrier to entry due to context gravity.

- The Metadata Layer: New middleware providers will offer an abstraction layer that handles contracts, shared data formats, entity IDs, and policy rules. These providers will set the standard for how systems should work together, defining interoperability rules and ensuring everything stays consistent.