Syncfusion Code Studio now available

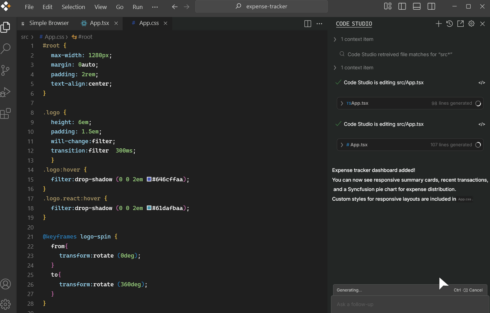

Code Studio is an AI-powered IDE that provides capabilities like autocompletion, code generation and explanations, refactoring of selected code blocks, and multistep agent automation for large-scale tasks.

Customers can use their preferred LLM to power Code Studio, and will also get access to security and governance features like SSO, role-based access controls, and usage analytics.

“Every technology leader is looking for a responsible path to scale with AI,” said Daniel Jebaraj, CEO of Syncfusion. “With Code Studio, we’re helping enterprise teams harness AI on their own terms, maintaining a balance of productivity, transparency, and control in a single environment.”

Linkerd to get MCP support

Buoyant, the company behind Linkerd, announced plans to add MCP support to the project. This will give users more visibility into their MCP traffic, including metrics on resource, tool, and prompt usage, such as failure rates, latency, and volume of data transmitted.

Additionally, Linkerd’s zero-trust framework can be used to apply fine-grained authorization policies to MCP calls, allowing companies to restrict access to specific tools or resources based on the identity of the agent.
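Buoyant has not yet published the policy format for MCP authorization, so the following is only a conceptual sketch of what identity-based, default-deny tool authorization means. A hypothetical `McpToolPolicy` class maps an agent's workload identity to the set of MCP tools it may call. This is not Linkerd's API. The identity string follows Linkerd's default mTLS identity naming, but the agent name, namespace, and tool names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class McpToolPolicy:
    """Hypothetical allow-list of MCP tools per agent identity (not Linkerd's API)."""

    # identity -> set of MCP tool names that identity may call
    allowed: dict[str, set[str]] = field(default_factory=dict)

    def allow(self, identity: str, tool: str) -> None:
        # Grant one agent identity access to one named tool.
        self.allowed.setdefault(identity, set()).add(tool)

    def is_authorized(self, identity: str, tool: str) -> bool:
        # Default deny, in the zero-trust spirit: unknown identities and
        # tools that were never granted are both rejected.
        return tool in self.allowed.get(identity, set())


# Example identity in Linkerd's default naming scheme; "billing-agent" and
# the "agents" namespace are made up for this sketch.
BILLING_AGENT = "billing-agent.agents.serviceaccount.identity.linkerd.cluster.local"

policy = McpToolPolicy()
policy.allow(BILLING_AGENT, "get_invoice")

print(policy.is_authorized(BILLING_AGENT, "get_invoice"))
print(policy.is_authorized(BILLING_AGENT, "delete_invoice"))
```

In this sketch the billing agent may read invoices but not delete them, and any identity that was never listed gets nothing. This matches the "restrict access to specific tools based on the identity of the agent" idea in the announcement.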

OpenAI begins developing new benchmarks that more accurately evaluate AI models across different languages and cultures

Only about 20% of the world’s population speaks English, yet existing AI benchmarks for multilingual models are falling short. For example, MMMLU has become so saturated that top models cluster near high scores, which OpenAI says makes it a poor indicator of real progress.

Additionally, existing multilingual benchmarks focus on translation and multiple-choice tasks, and they don’t necessarily measure how well a model understands regional context, culture, and history, OpenAI explained.

To remedy these issues, OpenAI is building new benchmarks for different languages and regions of the world, starting with India, its second-largest market. The new benchmark, IndQA, will “evaluate how well AI models understand and reason about questions that matter in Indian languages, across a wide range of cultural domains.”

India has 22 official languages, seven of which are spoken by at least 50 million people. IndQA contains 2,278 questions across 12 languages and 10 cultural domains. It was created with help from 261 domain experts from the country, including journalists, linguists, scholars, artists, and industry practitioners.

SnapLogic introduces new capabilities for agents and AI governance

Agent Snap is a new execution engine that allows for observable agent execution. The company compared it to onboarding a new employee, training and observing them before giving them greater responsibility.

Additionally, its new Agent Governance framework lets teams ensure that agents are safely deployed, monitored, and compliant, and provides visibility into data provenance and usage.

“By combining agent creation, governance, and open interoperability with enterprise-grade resiliency and AI-ready data infrastructure, SnapLogic empowers organizations to move confidently into the agentic era, connecting people, systems, and AI into one intelligent, secure, and scalable digital workforce,” the company wrote in a post.

Sauce Labs announces new data and analytics capabilities

Sauce AI for Insights lets development teams turn their testing data into insights on builds, devices, and test performance, down to a user-by-user basis. Its AI agent tailors its responses to whoever is asking the question. For example, a developer gets root cause analysis data, while a QA manager gets release-readiness insights.

Each response comes with dynamically generated charts, data tables, and links to relevant test artifacts, as well as clear attribution of how the data was gathered and processed.

“What excites me most isn’t that we built AI agents for testing; it’s that we’ve democratized quality intelligence across every level of the organization,” said Shubha Govil, chief product officer at Sauce Labs. “For the first time, everyone from executives to junior developers can now participate in quality conversations that once required specialized expertise.”

Google Cloud’s Ironwood TPUs will soon be available

The new Tensor Processing Units (TPUs) will be available in the next few weeks. They were designed specifically for demanding workloads like large-scale model training and high-volume, low-latency AI inference and model serving.

Ironwood TPUs can scale up to 9,216 chips in a single superpod, connected by Inter-Chip Interconnect (ICI) networking at 9.6 Tb/s.

The company also announced a preview of N4A, a new Axion-based virtual machine instance, as well as C4A, an Arm-based bare metal instance.

“Ultimately, whether you use Ironwood and Axion together or mix and match them with the other compute options available on AI Hypercomputer, this system-level approach gives you the ultimate flexibility and capability for the most demanding workloads,” the company wrote in a blog post.

DefectDojo announces security agent

DefectDojo Sensei acts like a security consultant and can answer questions about cybersecurity programs managed by DefectDojo.

Key capabilities include evolutionary algorithms for self-improvement, generation of tool recommendations for security issues, analysis of current tools, creation of customer-specific KPIs, and summaries of key findings.

It’s currently in alpha and is expected to become generally available by the end of the year, the company says.

Testlio expands its crowdsourced testing platform to provide human-in-the-loop testing for AI solutions

Testlio, a company that offers crowdsourced software testing, has announced a new end-to-end solution designed specifically for testing AI solutions.

Leveraging Testlio’s community of over 80,000 testers, the new solution provides human-in-the-loop validation for every stage of AI development.

“Trust, quality, and reliability of AI-powered applications rely on both technology and people,” said Summer Weisberg, COO and Interim CEO at Testlio. “Our managed service platform, combined with the scale and expertise of the Testlio Community, brings human intelligence and automation together so organizations can accelerate AI innovation without sacrificing quality or safety.”

Kong’s Insomnia 12 release adds capabilities to help with MCP server development

The latest release of Insomnia aims to give MCP developers a test-iterate-debug workflow so they can quickly build and validate their MCP servers.

Developers can now connect directly to their MCP servers, manually invoke tools with custom parameters, inspect protocol-level and authentication messages, and view responses.
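MCP messages are JSON-RPC 2.0, and invoking a tool means sending a `tools/call` request with the tool's name and arguments. The following is a minimal sketch of building that protocol-level message in Python. It is not Insomnia's code. The `get_weather` tool and its `location` argument are hypothetical, and a real session would first complete MCP's `initialize` handshake, which is omitted here.

```python
import json
from itertools import count

# Monotonically increasing JSON-RPC request ids for this sketch.
_request_ids = count(1)


def tools_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool; a real server advertises its tools and their input
# schemas in response to a `tools/list` request.
print(tools_call_request("get_weather", {"location": "Paris"}))
```

A tool-testing client lets you edit `arguments` by hand, send the request, and inspect the server's JSON-RPC response next to it.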

Insomnia 12 also adds support for generating mock servers from OpenAPI spec documents, JSON samples, or a URL. “What used to require hours of manual setup, like defining endpoints or crafting realistic responses, now happens almost instantly with AI. Mock servers can now transform from a ‘nice to have if you have the time to set them up’ into an essential part of a developer’s workflow, allowing you to test faster without manual overhead,” Kong wrote in a blog post.

OpenAI and AWS announce $38 billion deal for compute infrastructure

AWS and OpenAI announced a new partnership under which OpenAI’s workloads will run on AWS infrastructure.

AWS will build compute infrastructure for OpenAI that is optimized for AI processing efficiency and performance. Specifically, the company will cluster NVIDIA GPUs (GB200s and GB300s) on Amazon EC2 UltraServers.

OpenAI will commit $38 billion to Amazon over the next several years and will begin using AWS infrastructure immediately, with full capacity expected by the end of 2026 and the ability to scale further as needed.