Here’s a situation that plays out constantly in enterprise software teams. A product manager asks the company’s AI assistant: “Who are our top customers this quarter?” The system returns a clean, ranked list. It looks right. Everyone moves on.

Except the product team defines “top” by engagement. Finance defines it by net revenue. Sales defines it by deal size. The AI picked one interpretation, presented it with full confidence, and nobody noticed until a strategy decision got made based on numbers that meant something different to every person in the room.

This isn’t hallucination in the way people usually talk about it. The system didn’t make anything up. It just made a choice about meaning that was never its choice to make.

The Real Problem Isn’t the Model

There’s a widespread assumption in enterprise AI adoption that if you pick the right model, tune it carefully, and feed it good data, you’ll get reliable outputs. That assumption misses the actual failure mode.

LLMs are extraordinarily good at language. They are not good at organizational meaning. Ask your AI what your churn rate is, and watch what happens. The model doesn’t know whether you measure churn at the subscription level or the customer level. It doesn’t know whether you count downgrades or ignore them. It doesn’t know if enterprise accounts with multiple seats are handled differently. These are not answers buried in a document somewhere. They’re organizational decisions that live in tribal knowledge, team agreements, and data model comments written two years ago by someone who has since left the company.
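To make the churn ambiguity concrete, here is a minimal sketch in Python. The `Subscription` record, the sample data, and both function names are hypothetical; the point is only that the same records produce two different "churn rates" depending on the grain an organization has chosen.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscription:
    customer_id: str
    active_at_start: bool  # active at the start of the period
    active_at_end: bool    # still active at the end of the period


# Hypothetical data: customer "a" holds two subscriptions and cancels one.
SUBSCRIPTIONS = [
    Subscription("a", True, True),
    Subscription("a", True, False),
    Subscription("b", True, False),
    Subscription("c", True, True),
]


def subscription_churn(subs):
    """Share of subscriptions active at period start that ended."""
    started = [s for s in subs if s.active_at_start]
    lost = [s for s in started if not s.active_at_end]
    return len(lost) / len(started)


def customer_churn(subs):
    """Share of customers active at period start with nothing active at end."""
    at_start = {s.customer_id for s in subs if s.active_at_start}
    at_end = {s.customer_id for s in subs if s.active_at_end}
    return len(at_start - at_end) / len(at_start)


if __name__ == "__main__":
    print(f"subscription-level churn: {subscription_churn(SUBSCRIPTIONS):.2f}")
    print(f"customer-level churn:     {customer_churn(SUBSCRIPTIONS):.2f}")
```

Neither number is wrong. They answer different questions, and nothing in the data says which one the person asking had in mind.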

The model will infer. And inference, presented with confidence, is a liability.

Embeddings Don’t Fix This

The standard response to this problem is better retrieval. Embed your documentation, pull the most relevant chunks, give the model more context. It’s a reasonable intuition and a partial improvement. But it doesn’t solve the underlying issue.

Embeddings measure how close two pieces of text are in vector space; they say nothing about whether a given interpretation is actually correct for your organization. “Income” and “revenue” are neighbors in embedding space because they appear together constantly in financial writing. In your financial reporting system, conflating them is a serious error. No amount of retrieval resolves that, because the correct answer isn’t in any document. It’s in a decision your finance team made about how to define things, probably years ago, probably never written down in a form a machine can use.

The same structural problem shows up everywhere. “Active user” means something different to your engineering team (an API call) than to your product team (a completed transaction). “Conversion” means a successful HTTP request to one team and a signup-to-paid progression to another. “Engagement” is event frequency in one dashboard and session depth in another. Retrieval doesn’t resolve definitional ambiguity. It just retrieves more text that contains the ambiguity.

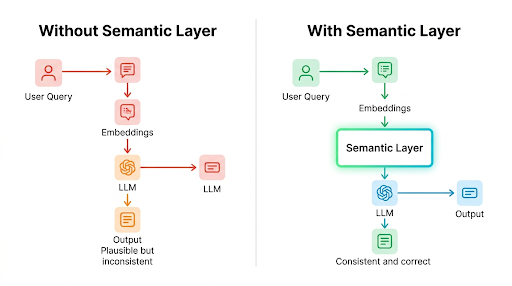

Figure 1: Without a semantic layer, LLM outputs are plausible but inconsistent. With one, they’re grounded and correct.

What Actually Needs to Happen

The answer is a semantic layer: a structured, machine-readable representation of what your organization’s terms actually mean. Not a glossary. Not better documentation. A formal encoding of entities, relationships, metrics, and disambiguation rules that sits between your data and your AI system, so that when someone asks about churn or active accounts or top customers, the system isn’t guessing.
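As a rough illustration of what "isn’t guessing" can mean in code, the sketch below stores each term with the team that owns its meaning and refuses to resolve a term that has more than one owner unless the caller says whose definition is wanted. The class names, the `owner` field, and the rule strings are all assumptions made for this example, not the API of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Definition:
    term: str
    owner: str  # team that owns this meaning (hypothetical field)
    rule: str   # plain-text rule; a real layer might store SQL or an expression


class AmbiguousTermError(Exception):
    """Raised when a term has several meanings and no owner was specified."""


class SemanticLayer:
    def __init__(self, definitions):
        # Index definitions as term -> owner -> Definition.
        self._by_term = {}
        for d in definitions:
            self._by_term.setdefault(d.term, {})[d.owner] = d

    def resolve(self, term, owner=None):
        candidates = self._by_term.get(term, {})
        if not candidates:
            raise KeyError(f"no definition for {term!r}")
        if owner is not None:
            return candidates[owner]
        if len(candidates) == 1:
            return next(iter(candidates.values()))
        # More than one meaning and no context: fail loudly instead of picking.
        owners = ", ".join(sorted(candidates))
        raise AmbiguousTermError(f"{term!r} is defined by: {owners}")


LAYER = SemanticLayer([
    Definition("active_user", "engineering", "made an API call in the period"),
    Definition("active_user", "product", "completed a transaction in the period"),
])

if __name__ == "__main__":
    print(LAYER.resolve("active_user", owner="product").rule)
    try:
        LAYER.resolve("active_user")
    except AmbiguousTermError as err:
        print("refused to guess:", err)
```

The design choice worth noting is the failure path: an ambiguous term produces an error that a caller can turn into a clarifying question, rather than a silent default.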

This isn’t a new idea in the data world. Tools like dbt and Looker have applied it to business intelligence for years. What’s new is the pressure to extend it into AI pipelines, and the tooling is catching up: the dbt Semantic Layer now supports direct AI pipeline integration, and platforms like Cube are building native LLM connections for exactly this purpose.

The practical starting point for most teams is a schema-based approach: YAML or JSON configuration files, version-controlled in git, injected at inference time. Less rigorous than formal ontologies, but dramatically more maintainable, and usually sufficient. If you already have a BI semantic layer, your definitional work is largely done. The challenge is making it queryable when the AI needs it.
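A minimal sketch of the schema-based approach, assuming a JSON file of metric definitions that lives in the repository. Here the JSON is inlined as a string so the example is self-contained. The field names (`grain`, `counts_downgrades`, `owner`) and the prompt wording are invented for illustration, and the actual model call is deliberately left out.

```python
import json

# Stand-in for a version-controlled file such as a metrics JSON in git.
SEMANTIC_LAYER_JSON = """
{
  "metrics": {
    "churn_rate": {
      "grain": "customer",
      "counts_downgrades": false,
      "owner": "finance"
    }
  }
}
"""


def load_metrics(raw):
    """Parse the configuration and return the metric definitions."""
    return json.loads(raw)["metrics"]


def build_context(metrics, names):
    """Render the selected definitions as text to prepend to a prompt."""
    lines = ["Use only these definitions:"]
    for name in names:
        spec = metrics[name]  # KeyError here means the term is undefined
        fields = ", ".join(
            f"{key}={json.dumps(value)}" for key, value in sorted(spec.items())
        )
        lines.append(f"- {name}: {fields}")
    return "\n".join(lines)


def build_prompt(question, metrics, names):
    """Inject the definitions at inference time, ahead of the user question."""
    return f"{build_context(metrics, names)}\n\nQuestion: {question}"


if __name__ == "__main__":
    metrics = load_metrics(SEMANTIC_LAYER_JSON)
    print(build_prompt("What is our churn rate?", metrics, ["churn_rate"]))
```

Because the definitions are plain files, a change to what "churn" means goes through the same review and history as a code change.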

The Harder Problem Is Organizational

Here’s what most architecture posts leave out: the technical implementation is the easy part. Getting three departments to agree on what “active” means is not. Building and maintaining a semantic layer forces conversations that organizations routinely avoid, and it surfaces disagreements that have been quietly producing inconsistent results for years. That’s uncomfortable. It’s also the point.

There’s a simple test I use: if a new hire would need to read internal documentation to understand what a key business term means, that term belongs in a semantic layer, not in a prompt.

The next phase of enterprise AI isn’t about which model you use. It’s about how well your organization has systematized its own knowledge for machine consumption. From prompt engineering to context engineering. From data pipelines to meaning pipelines. The teams that get this right will produce AI outputs that aren’t just fluent; they’ll be correct. In enterprise systems, being fluent is not enough. If your AI is not definitionally correct, it’s operationally unreliable.

Instead of asking “Who are our top customers?”, define it:

TopCustomer = revenue_last_90_days > $50K AND active_subscription = true
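One way to read that definition as executable code is the sketch below. The `Customer` record, the constant name, and the assumption that revenue is stored in US dollars are illustrative; only the threshold and the two conditions come from the definition itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    name: str
    revenue_last_90_days: float  # assumed to be in USD
    active_subscription: bool


# Threshold taken from the definition: revenue_last_90_days > $50K.
TOP_CUSTOMER_REVENUE_THRESHOLD = 50_000


def is_top_customer(customer):
    """TopCustomer = revenue_last_90_days > $50K AND active_subscription = true."""
    return (
        customer.revenue_last_90_days > TOP_CUSTOMER_REVENUE_THRESHOLD
        and customer.active_subscription
    )


if __name__ == "__main__":
    # Hypothetical customers, for illustration only.
    customers = [
        Customer("Acme", 80_000, True),
        Customer("Globex", 80_000, False),
        Customer("Initech", 50_000, True),
    ]
    for c in customers:
        print(c.name, is_top_customer(c))
```

Writing the rule down this way also forces the edge cases into the open, such as whether a customer at exactly $50K qualifies. Under the strict inequality above, it does not.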