Most enterprise AI projects don’t fail because of weak models. They fail because global teams cannot execute AI delivery at scale.

One team trains models in one geography. Another handles deployment elsewhere. Governance lives in disconnected spreadsheets. Sprint priorities change across time zones. Tooling is inconsistent. Ownership is fragmented. By the time the AI workload reaches production, velocity collapses under operational chaos. This is the real AI bottleneck nobody wants to talk about.

Enterprises are investing millions in AI transformation while still managing delivery through outdated offshore structures built for traditional software development. That model breaks fast when AI pipelines, MLOps, automation, governance, and real-time decision-making enter the picture. AI workloads demand operational alignment, not isolated execution. And that’s exactly why pod models backed by a GCC (Global Capability Center) are becoming the new foundation for scalable AI delivery. Instead of fragmented outsourcing teams working in silos, GCC pods create shared ownership, standardized AI workflows, embedded governance, and continuous execution alignment across offshore and nearshore operations. The result is not just faster delivery.

It’s predictable AI execution. Better governance. Cleaner handoffs. Stronger accountability. And AI systems that actually make it to production without burning through time, budget, and leadership patience. Because at scale, AI success is no longer a model problem. It’s an operational governance problem.

Why Global AI Teams Struggle with Pipeline Governance

Most global AI teams are operating on disconnected execution models. Offshore AI development teams, nearshore engineering units, and in-house stakeholders often work with different priorities, tools, and delivery expectations. The result is fragmented AI pipeline governance where model development, testing, deployment, and monitoring lose alignment across the workflow. AI workloads slow down not because of technical limitations, but because operational coordination breaks under scale.

The second problem is inconsistent AI/ML workflows across distributed teams. One team may follow strict MLOps governance while another relies on manual deployment processes and undocumented changes. This creates version conflicts, poor model reproducibility, delayed sprint execution, and unstable production AI environments. Without standardized AI pipeline governance, enterprises end up scaling confusion instead of scaling intelligence.

Time-zone separation makes the problem worse. Offshore and nearshore AI teams often operate with weak shared context, reactive communication, and limited accountability structures. Critical decisions get delayed between handoffs. Sprint priorities drift. AI deployment issues remain unresolved longer than they should. Over time, the organization loses delivery predictability, governance visibility, and confidence in its ability to operationalize enterprise AI at scale.

What AI Pipeline Governance Actually Means

AI pipeline governance is not about adding more approvals, meetings, or process layers. It’s about creating execution consistency across AI development, MLOps workflows, model deployment, data governance, and production AI operations. When global AI teams work across offshore and nearshore environments, governance becomes the system that standardizes how AI workloads are built, tested, monitored, secured, and scaled. Without a strong AI governance framework, enterprises end up with fragmented AI pipelines, inconsistent model behavior, deployment failures, and zero operational visibility.

Effective AI pipeline governance creates alignment across the entire AI lifecycle. That includes standardized AI/ML workflows, version control, automated testing, CI/CD for AI models, compliance monitoring, sprint accountability, and shared delivery metrics across distributed AI engineering teams. In practical terms, governance ensures that every AI initiative follows the same operational discipline regardless of geography, team structure, or time zone. The companies scaling enterprise AI successfully are not the ones building the most models. They’re the ones building predictable, governed, and repeatable AI delivery systems.

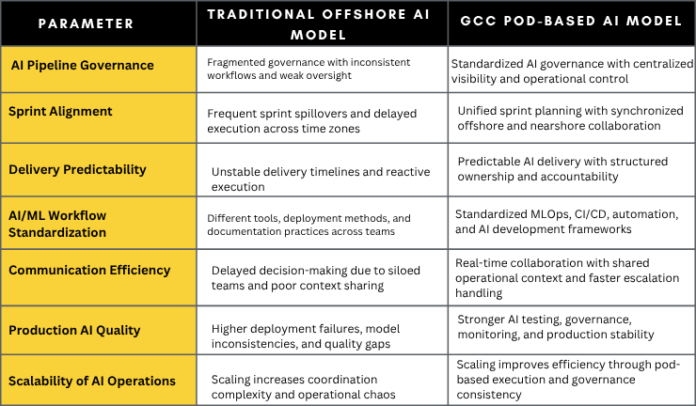

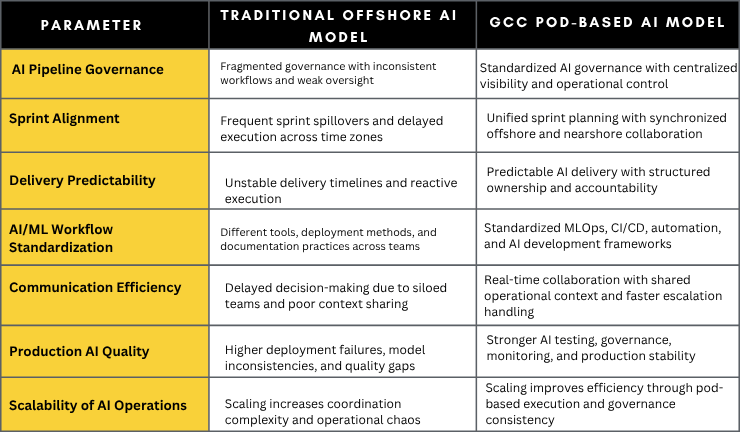

Top 7 Metrics to Evaluate Traditional Offshore AI Models vs GCC Pod Models

How GCC Pods Create Alignment Across Offshore and Nearshore AI Teams

Standardized Tooling

One of the biggest reasons global AI teams fail is workflow inconsistency. Different teams use different AI development environments, deployment methods, testing frameworks, and documentation standards. GCC pods eliminate this fragmentation by standardizing the entire AI technology stack across offshore and nearshore teams. From MLOps workflows and CI/CD pipelines to AI monitoring and version control, every team operates within the same execution framework.
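
As a rough illustration of what a standardized stack can mean in practice, the sketch below compares each team's declared toolchain against a shared baseline and reports where a team deviates. The team names, tool categories, and tool names are hypothetical placeholders chosen for the example, not a description of any specific GCC pod setup.

```python
from typing import Dict, List

# Hypothetical shared baseline: the tools every pod is expected to use.
BASELINE: Dict[str, str] = {
    "experiment_tracking": "mlflow",
    "ci_cd": "github-actions",
    "model_registry": "mlflow",
}


def find_toolchain_drift(teams: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """Return, per team, the categories where its tooling differs from BASELINE."""
    drift: Dict[str, List[str]] = {}
    for team, tools in teams.items():
        mismatches = [
            f"{category}: expected {expected}, found {tools.get(category, 'missing')}"
            for category, expected in BASELINE.items()
            if tools.get(category) != expected
        ]
        if mismatches:
            drift[team] = mismatches
    return drift


# Illustrative input: the offshore team still runs a different CI/CD tool.
teams = {
    "offshore": {
        "experiment_tracking": "mlflow",
        "ci_cd": "jenkins",
        "model_registry": "mlflow",
    },
    "nearshore": {
        "experiment_tracking": "mlflow",
        "ci_cd": "github-actions",
        "model_registry": "mlflow",
    },
}
report = find_toolchain_drift(teams)
```

A check like this could run on a schedule so that tooling drift shows up as a report rather than as a surprise during a handoff.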

Unified Sprint Planning

Traditional offshore AI teams often work on disconnected sprint cycles that create delays, rework, and delivery confusion. GCC pod models solve this by aligning sprint planning, delivery priorities, and execution timelines across all regions. Offshore and nearshore AI teams operate with shared roadmaps, synchronized goals, and continuous delivery visibility, which improves AI pipeline governance and accelerates production readiness.

Cross-Functional Collaboration

AI delivery cannot scale when data engineers, AI developers, DevOps teams, QA specialists, and business stakeholders operate in isolation. GCC pods bring cross-functional AI teams into a single operational structure with shared ownership and accountability. This reduces communication gaps, improves decision-making speed, and creates stronger alignment between AI strategy, engineering execution, and business outcomes.

Embedded Automation

Manual AI operations slow down enterprise scalability and increase production risk. GCC-backed AI delivery models integrate automation directly into AI pipelines through automated testing, CI/CD for AI models, deployment validation, monitoring, and workflow orchestration. Embedded automation reduces human dependency, improves execution consistency, and enables distributed AI teams to deliver faster without compromising governance or quality.
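
One way to picture deployment validation is as an automated gate that every release candidate must pass, whichever team produced it. The sketch below is a minimal, assumed example: the `ReleaseCandidate` fields, the `MIN_ACCURACY` threshold, and the three checks are illustrative choices, not a prescribed standard.

```python
from dataclasses import dataclass
from typing import List

MIN_ACCURACY = 0.90  # hypothetical threshold agreed across all pods


@dataclass
class ReleaseCandidate:
    model_name: str
    accuracy: float       # offline evaluation accuracy, between 0 and 1
    tests_passed: bool    # result of the automated test suite
    has_model_card: bool  # whether documentation exists for this version


def gate_failures(candidate: ReleaseCandidate) -> List[str]:
    """Return the reasons a candidate is blocked; an empty list means it passes."""
    failures: List[str] = []
    if not candidate.tests_passed:
        failures.append("automated tests failed")
    if candidate.accuracy < MIN_ACCURACY:
        failures.append(
            f"accuracy {candidate.accuracy:.2f} below {MIN_ACCURACY:.2f}"
        )
    if not candidate.has_model_card:
        failures.append("missing model documentation")
    return failures


def can_deploy(candidate: ReleaseCandidate) -> bool:
    """A candidate may be deployed only when no gate check fails."""
    return not gate_failures(candidate)


blocked = ReleaseCandidate("churn-model", accuracy=0.87, tests_passed=True, has_model_card=False)
approved = ReleaseCandidate("churn-model", accuracy=0.93, tests_passed=True, has_model_card=True)
```

Because the gate returns explicit reasons rather than a bare yes or no, a team in another time zone can act on a blocked release without waiting for a handoff conversation.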

Real-Time Governance Visibility

Most enterprises struggle with AI governance because leadership lacks visibility into what is happening across distributed teams. GCC pod models create centralized governance visibility through shared dashboards, workflow tracking, compliance monitoring, sprint reporting, and AI performance analytics. Decision-makers gain real-time insight into delivery health, operational bottlenecks, deployment risks, and AI workload progress across offshore and nearshore operations.

The Operational Stack Behind Scalable AI Delivery

- MLOps Automation: Automates AI model training, deployment, retraining, and workflow orchestration to improve scalability, execution consistency, and operational efficiency across distributed AI teams.

- CI/CD for AI: Standardizes continuous integration and deployment pipelines for AI models, enabling faster releases, stable deployments, and predictable production delivery.

- Governance Checkpoints: Establishes validation controls across AI workflows, data usage, compliance, testing, and deployment to ensure secure and governed AI operations.

- Shared Observability: Creates centralized visibility into AI pipeline performance, system health, deployment status, and operational bottlenecks across offshore and nearshore teams.

- AI QA Automation: Automates AI testing, model validation, bias detection, and performance checks to reduce production failures and improve AI delivery quality.

- Version Control Discipline: Maintains consistent tracking of AI models, datasets, workflows, and code changes to eliminate conflicts and improve reproducibility.

- Documentation Systems: Centralizes operational knowledge, AI workflows, governance standards, and sprint decisions to improve collaboration and long-term scalability.

- AI Monitoring Workflows: Continuously monitors model accuracy, drift, system behavior, and AI performance to maintain production stability and governance compliance.
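
To make the drift monitoring item above more concrete, here is a minimal sketch of one widely used drift statistic, the population stability index (PSI), computed between a baseline sample and a recent sample of a single numeric feature. The choice of PSI, the bin count, and the `DRIFT_THRESHOLD` value are assumptions made for the example; a real monitoring workflow may well use different statistics and thresholds.

```python
import math
from typing import List, Sequence

DRIFT_THRESHOLD = 0.25  # assumed alert level; tune for your own data


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population stability index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 when the baseline has no spread.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


baseline = [i / 100 for i in range(100)]       # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]  # concentrated in the upper half

stable_score = psi(baseline, baseline)
drift_score = psi(baseline, shifted)
needs_review = drift_score > DRIFT_THRESHOLD
```

Publishing a score like this to a shared dashboard gives every team the same signal for when a model needs review, instead of each location judging drift by its own informal criteria.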

Signs Your AI Delivery Model Is Already Breaking

- Sprint spillovers are becoming routine, and AI workloads consistently miss production timelines.

- AI releases feel unpredictable because deployment quality, testing standards, and delivery processes vary across teams.

- Offshore and nearshore AI teams are using different workflows, tools, and documentation practices with little operational consistency.

- The same production bugs, model failures, and AI performance issues keep resurfacing across multiple release cycles.

- Offshore AI teams rely heavily on constant escalations, approvals, and intervention from internal leadership to keep execution moving.

- AI governance exists in presentations and policy documents, but not in actual day-to-day AI operations, workflows, or delivery accountability.

How ISHIR’s GCC Pods Help Enterprises Scale AI Delivery with Governance and Agility

ISHIR helps enterprises fix fragmented AI delivery through GCC-backed Agile Pods designed for scalable AI execution. Instead of disconnected offshore resources, ISHIR builds cross-functional AI pods aligned around shared ownership, standardized workflows, sprint accountability, and enterprise AI governance. This creates faster AI delivery, stronger operational visibility, and predictable execution across offshore and nearshore teams without the coordination chaos that slows most AI initiatives.

Through its Enterprise AI services, GCC-on-demand expertise model, and Expertise Accelerator framework, ISHIR enables organizations to scale AI engineering capabilities without compromising governance or delivery quality. From MLOps automation and AI pipeline governance to AI QA, deployment, and operational support, ISHIR creates AI delivery systems that are built for production scale, business continuity, and long-term business growth.

AI projects don’t fail from lack of innovation. They fail from broken execution across global teams.

FAQs

Q. Why do offshore AI projects fail during production deployment?

Most offshore AI projects fail because the delivery model is fragmented. Different teams use different AI workflows, deployment standards, and governance practices, which creates operational gaps during production scaling. Poor AI pipeline governance, weak sprint alignment, and inconsistent MLOps processes lead to delayed releases, model instability, and recurring production failures. Enterprises often discover too late that their AI strategy lacks execution consistency.

Q. What is AI pipeline governance and why is it important for enterprise AI?

AI pipeline governance is the framework that standardizes how AI models are developed, tested, deployed, monitored, and managed across the enterprise AI lifecycle. It helps organizations maintain compliance, improve AI model reliability, reduce deployment risks, and create operational consistency across global AI teams. Without AI governance frameworks and MLOps discipline, enterprises struggle to scale AI beyond proof-of-concept environments.

Q. How do GCC pod models improve offshore and nearshore AI delivery?

GCC pod models replace siloed outsourcing structures with integrated AI delivery teams that operate with shared accountability, standardized workflows, and centralized governance visibility. Offshore and nearshore AI teams work within the same sprint cycles, AI development standards, and operational frameworks. This improves collaboration, reduces delivery friction, and creates predictable enterprise AI execution across regions.

Q. What are the biggest AI governance challenges for global enterprises?

The biggest AI governance challenges include inconsistent AI/ML workflows, fragmented tooling, weak documentation, model drift, lack of operational visibility, and poor coordination between distributed AI engineering teams. Many enterprises also struggle with AI compliance, deployment accountability, and real-time monitoring as AI workloads scale globally. Governance becomes significantly harder when offshore and nearshore teams operate without standardized AI delivery systems.

Q. How does MLOps help scale enterprise AI operations?

MLOps creates standardized workflows for AI model training, testing, deployment, monitoring, and retraining across the machine learning lifecycle. It combines automation, CI/CD for AI, governance controls, observability, and version management to improve AI scalability and production reliability. Enterprises using MLOps frameworks can reduce deployment delays, improve AI quality, and operationalize AI faster across distributed engineering teams.

Q. Why do AI initiatives struggle to move beyond proof of concept?

Most AI initiatives fail to scale because enterprises focus heavily on models while ignoring operational execution. AI pilots often succeed in controlled environments, but production AI requires governance, automation, monitoring, standardized workflows, and cross-functional collaboration. Without a scalable AI operating model, organizations face deployment bottlenecks, inconsistent delivery quality, and growing operational complexity.

Q. What is the difference between traditional AI outsourcing and GCC-based AI operations?

Traditional AI outsourcing focuses on resource augmentation and task-based execution, while GCC-based AI operations focus on integrated delivery ownership, governance, and long-term scalability. GCC pod models create centralized AI governance, shared sprint accountability, standardized MLOps workflows, and operational visibility across offshore and nearshore teams. This makes AI delivery faster, more predictable, and easier to scale at the enterprise level.

Q. How can enterprises improve AI delivery consistency across global teams?

Enterprises can improve AI delivery consistency by standardizing AI development workflows, implementing MLOps automation, centralizing governance visibility, and aligning offshore and nearshore teams through GCC pod structures. Strong AI pipeline governance ensures every team follows the same deployment standards, sprint planning process, monitoring workflows, and operational controls. Consistency in execution is what ultimately enables scalable enterprise AI delivery.